Identifying Attack-Specific Signatures in Adversarial Examples

- DOI: 10.60864/4m2m-ps69
- Submitted by: Hossein Souri
- Last updated: 15 April 2024 - 11:40pm
- Document Type: Poster
- Document Year: 2024
- Presenters: Hossein Souri


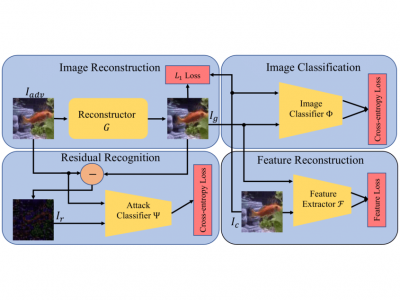

The adversarial attack literature contains numerous algorithms for crafting perturbations that manipulate neural network predictions. Many of these attacks optimize inputs under the same constraints and have a similar downstream impact on the models they attack. In this work, we first show how to reconstruct an adversarial perturbation, that is, the difference between an adversarial example and the original natural image, from the adversarial example alone. Then, we classify the reconstructed perturbations according to the algorithm that generated them. This pipeline, REDRL, can detect the attack algorithm used to generate a sample from only the sample itself. The ability to determine which algorithm generated an example implies that different attack algorithms actually produce unique signatures in their adversarial examples.
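
The two stages described above (reconstruct the perturbation, then classify it) can be illustrated with a toy sketch. The abstract does not specify the models REDRL uses, so everything below is an assumption made for illustration: `mean_image_estimate` is a hypothetical stand-in for a learned reconstructor, `classify_perturbation` is a simple nearest-signature rule rather than a trained classifier, and the `signatures` values are made-up numbers, not real attack outputs.

```python
from typing import Callable, Dict, List

Vector = List[float]


def perturbation(adversarial: Vector, natural: Vector) -> Vector:
    """Perturbation = adversarial example minus the natural image."""
    return [a - n for a, n in zip(adversarial, natural)]


def mean_image_estimate(adversarial: Vector) -> Vector:
    """Hypothetical stand-in for a learned reconstructor: guesses that the
    natural image is constant and equal to the mean of the input."""
    mean = sum(adversarial) / len(adversarial)
    return [mean] * len(adversarial)


def reconstruct_perturbation(
    adversarial: Vector, estimate_natural: Callable[[Vector], Vector]
) -> Vector:
    """Stage 1: estimate the perturbation from the adversarial example alone."""
    return perturbation(adversarial, estimate_natural(adversarial))


def classify_perturbation(delta: Vector, signatures: Dict[str, Vector]) -> str:
    """Stage 2 (assumed rule): return the label of the nearest signature
    under squared Euclidean distance."""
    def distance(label: str) -> float:
        return sum((d - s) ** 2 for d, s in zip(delta, signatures[label]))
    return min(signatures, key=distance)


# Toy data: a flat "image" and two made-up, zero-mean attack signatures.
natural = [0.2, 0.2, 0.2, 0.2]
signatures = {
    "attack_a": [0.1, -0.1, 0.1, -0.1],
    "attack_b": [0.2, 0.0, -0.2, 0.0],
}

# Build an adversarial example with attack_a, then recover the attack label
# using only the adversarial example (the natural image is not passed in).
adversarial = [n + d for n, d in zip(natural, signatures["attack_a"])]
recovered = reconstruct_perturbation(adversarial, mean_image_estimate)
predicted = classify_perturbation(recovered, signatures)
print(predicted)
```

The point of the sketch is the data flow: the second stage sees only the reconstructed perturbation, never the original image. In practice both stand-ins would presumably be replaced by learned models, and the toy reconstructor only works here because the example image is flat and the perturbations have zero mean.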