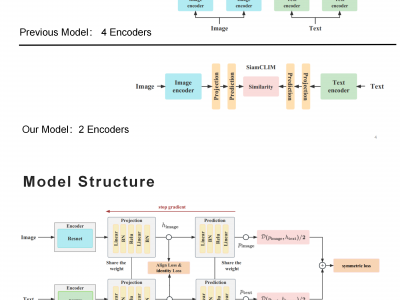

SiamCLIM: Text-Based Pedestrian Search via Multi-modal Siamese Contrastive Learning

- DOI: 10.60864/zb04-s530

- Submitted by: Runlin Huang

- Last updated: 17 November 2023 - 12:05pm

- Document Type: Presentation Slides

- Document Year: 2023

- Presenters: Runlin Huang

Text-based pedestrian search (TBPS) aims to retrieve target persons from an image gallery using descriptive text queries. Despite remarkable progress in recent state-of-the-art approaches, existing methods still struggle to efficiently extract discriminative features from multi-modal data. To address the problem of cross-modal fine-grained text-to-image matching, we propose a novel Siamese Contrastive Language-Image Model (SiamCLIM). The model realizes interaction between the textual description and the target person through deep bilateral projection, and uses a siamese network structure to capture the relationship between text and image. Experiments show that our model significantly outperforms state-of-the-art methods on cross-modal fine-grained matching tasks. On the benchmark dataset CUHK-PEDES, it outperforms current methods by 11.55%, 11.02%, and 7.76% in terms of top-1, top-5, and top-10 accuracy,