IEEE ICIP 2023 - The International Conference on Image Processing (ICIP), sponsored by the IEEE Signal Processing Society, is the premier forum for the presentation of technological advances and research results in the fields of theoretical, experimental, and applied image and video processing. Held annually since 1994, ICIP brings together leading engineers and scientists in image and video processing from around the world.

- Read more about STRENGTHENING DEEP LEARNING MODEL FOR ROBUST SCREENING OF VOLUMETRIC CHEST RADIOGRAPHIC SCANS


Emerging deep learning algorithms have shown significant potential for building efficient computer-aided diagnosis tools that automatically detect lung infections from chest radiographs. However, many existing methods are slice-based and require manual selection of appropriate slices from the entire CT scan, which is tedious and requires expert radiologists.

16 Views

- Read more about SegGuard: Defending Scene Segmentation against Adversarial Patch Attack - Supplementary Material


Adversarial Patch Attacks (APAs) induce prediction errors by inserting carefully crafted regions into images. This paper presents the first defence against APAs for deep networks that perform semantic segmentation of scenes. We show that a conditional generator can be trained to produce patches on demand targeting specific classes and achieving superior performance versus conventional pixel-optimised patch attacks.
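The patch-insertion step that an APA relies on can be illustrated with a minimal sketch. It only shows how a region of an image is overwritten by a patch; it does not craft an adversarial patch and does not implement the defence described above. The function name `apply_patch`, the list-of-lists image layout, and the example values are assumptions made for illustration.

```python
def apply_patch(image, patch, top, left):
    """Return a copy of `image` with `patch` pasted at row `top`, column `left`.

    Both arguments are 2D lists of pixel values (a hypothetical layout).
    """
    height, width = len(image), len(image[0])
    p_height, p_width = len(patch), len(patch[0])
    if top < 0 or left < 0 or top + p_height > height or left + p_width > width:
        raise ValueError("patch does not fit inside the image")
    patched = [row[:] for row in image]  # copy so the input stays untouched
    for i in range(p_height):
        for j in range(p_width):
            patched[top + i][left + j] = patch[i][j]
    return patched


# Illustrative values only: a blank 4x6 image and a 2x2 patch.
image = [[0.0] * 6 for _ in range(4)]
patch = [[1.0, 1.0], [1.0, 1.0]]
patched = apply_patch(image, patch, top=1, left=3)
```

In an actual attack the patch contents would be optimised or generated to cause mislabelled pixels; here they are constant placeholders.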

33 Views

- Read more about MAP-informed Unrolled Algorithms for Hyper-parameter Estimation


Hyper-parameter tuning, and especially regularisation parameter estimation, is a challenging but essential task when solving inverse problems. The solution is obtained here through the minimisation of a functional composed of a data fidelity term and a regularisation term. These terms are balanced by one or several regularisation parameters, which are estimated within an unrolled strategy jointly with the solution of the inverse problem. The resulting network is
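As a rough illustration of what unrolling means, the sketch below runs a fixed number of gradient steps ("layers") on a functional made of a data fidelity term and a regularisation term. The specific setup is an assumption chosen for simplicity, not the formulation of the paper: a denoising problem with an identity forward operator, a quadratic regulariser, and a regularisation parameter `lam` that is fixed by hand rather than estimated.

```python
def unrolled_solver(y, lam, step, num_layers):
    """Run `num_layers` gradient steps on 0.5*||x - y||^2 + 0.5*lam*||x||^2.

    Each loop iteration plays the role of one layer of an unrolled network.
    The functional is an assumed toy example, not the one used in the paper.
    """
    x = list(y)  # initialise with the observation
    for _ in range(num_layers):
        # Gradient of the data fidelity term plus the regularisation term.
        grad = [(xi - yi) + lam * xi for xi, yi in zip(x, y)]
        x = [xi - step * gi for xi, gi in zip(x, grad)]
    return x


def closed_form(y, lam):
    """Exact minimiser of the same toy functional, used as a reference."""
    return [yi / (1.0 + lam) for yi in y]


# Illustrative values only.
y = [1.0, -2.0, 4.0]
lam = 0.5
estimate = unrolled_solver(y, lam, step=0.4, num_layers=30)
reference = closed_form(y, lam)
```

In a learned setting, quantities such as `lam` and `step` would become trainable or predicted per layer; this sketch keeps them constant to show only the unrolled structure.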

46 Views

- Read more about DEEP UNFOLDING NETWORK WITH PHYSICS-BASED PRIORS FOR UNDERWATER IMAGE ENHANCEMENT


We propose an underwater image enhancement algorithm that leverages both model- and learning-based approaches by unfolding an iterative algorithm. We first formulate the underwater image enhancement task as a joint optimization problem, based on the image formation model together with physical-model and underwater-related priors. Then, we solve the optimization problem iteratively. Finally, we unfold the iterative algorithm so that, at each iteration, the optimization variables are updated by closed-form solutions and the regularizers for image priors are updated by learned deep networks.
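The alternation between closed-form updates and learned modules can be sketched with a generic half-quadratic splitting loop. This is a swapped-in textbook scheme on a toy denoising problem, not the authors' underwater formulation: the objective, the penalty weight `mu`, and the function `prior_module` (soft-thresholding standing in for a learned network) are all assumptions.

```python
def prior_module(x, threshold):
    """Placeholder for a learned prior network (here: soft-thresholding)."""
    out = []
    for v in x:
        if v > threshold:
            out.append(v - threshold)
        elif v < -threshold:
            out.append(v + threshold)
        else:
            out.append(0.0)
    return out


def unfolded_hqs(y, mu, threshold, num_stages):
    """Unfold half-quadratic splitting for 0.5*||x - y||^2 + R(x), with z ~ x."""
    z = list(y)
    x = list(y)
    for _ in range(num_stages):
        # Closed-form update: argmin_x 0.5*||x - y||^2 + 0.5*mu*||x - z||^2.
        x = [(yi + mu * zi) / (1.0 + mu) for yi, zi in zip(y, z)]
        # Prior update: a learned network would be called here instead.
        z = prior_module(x, threshold)
    return x


# Illustrative values only.
y = [3.0, 0.2, -1.5]
x_hat = unfolded_hqs(y, mu=1.0, threshold=0.5, num_stages=50)
```

Replacing `prior_module` with a trained network at each stage, and training the stages end to end, is the general idea behind deep unfolding.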

81 Views

We address the problem of determining whether an input is a facial image, using only a facial-expression recognition (FER) dataset for training.

66 Views


- Read more about Feature integration via back-projection ordering multi-modal Gaussian process latent variable model for rating prediction


In this paper, we present a method of feature integration via back-projection ordering multi-modal Gaussian process latent variable model (BPomGP) for rating prediction. To extract features that reflect users’ interests, the proposed method uses the known ratings assigned to viewed contents together with the behavior information recorded while users view those contents, which is related to their interests. BPomGP has two important approaches. Unlike the training phase, where the above two types of heterogeneous information are available, behavior information is not given in the test phase.

38 Views

- Read more about Multi-view variational recurrent neural network for human emotion recognition using multi-modal biological signals


In this paper, the Multi-view Variational Recurrent Neural Network (MvVRNN) is proposed for multi-modal human emotion recognition using gaze and brain activity data recorded while humans view images. To realize accurate emotion recognition, we focus on the following three characteristics of biological signals: 1) the relationship between implicit and explicit information such as gaze and brain activity data, 2) the temporal changes related to human emotions, and 3) the effects of noise that can be introduced during data acquisition.

88 Views

- Read more about SANDWICHED VIDEO COMPRESSION: EFFICIENTLY EXTENDING THE REACH OF STANDARD CODECS WITH NEURAL WRAPPERS


We propose sandwiched video compression, a video compression system that wraps neural networks around a standard video codec. The sandwich framework consists of a neural pre- and post-processor with a standard video codec between them. The networks are trained jointly to optimize a rate-distortion loss function with the goal of significantly improving over the standard codec in various compression scenarios. End-to-end training in this setting requires a differentiable proxy for the standard video codec, which incorporates temporal
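The data flow of the sandwich and the form of a rate-distortion loss can be sketched as follows. Everything concrete here is an assumption for illustration: the pre- and post-processors are reduced to a scalar gain, the codec stand-in is plain uniform quantisation, and the rate estimate is a simple function of the quantised symbols. Note that rounding is not differentiable, so actual end-to-end training would need a differentiable substitute for `codec_proxy`.

```python
import math


def pre_process(frame, gain):
    """Hypothetical pre-processor: a scalar gain instead of a neural network."""
    return [gain * v for v in frame]


def codec_proxy(frame, step):
    """Stand-in for the codec: uniform quantisation with step size `step`."""
    symbols = [round(v / step) for v in frame]
    return [s * step for s in symbols], symbols


def post_process(frame, gain):
    """Hypothetical post-processor: undo the gain of the pre-processor."""
    return [v / gain for v in frame]


def rate_proxy(symbols):
    """Assumed rate estimate: larger symbol magnitudes cost more bits."""
    return sum(math.log2(1 + abs(s)) for s in symbols)


def rd_loss(frame, gain, step, lam):
    """Rate-distortion loss D + lam * R for one frame passed through the sandwich."""
    decoded, symbols = codec_proxy(pre_process(frame, gain), step)
    output = post_process(decoded, gain)
    distortion = sum((a - b) ** 2 for a, b in zip(frame, output)) / len(frame)
    return distortion + lam * rate_proxy(symbols)


# Illustrative values only.
frame = [0.0, 1.0, 2.0]
loss = rd_loss(frame, gain=1.0, step=1.0, lam=0.1)
```

The weight `lam` trades distortion against rate; sweeping it would trace out different operating points of the combined system.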

38 Views

- Read more about Pairwise Feature Learning for Unseen Plant Disease Recognition

- Log in to post comments

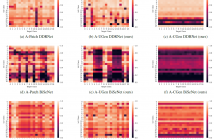

With the advent of deep learning, computer vision approaches are increasingly used to identify plant diseases at large scale across multiple crops and diseases. However, this requires a large amount of plant disease data, which is often not readily available, and the cost of acquiring disease images is high. Thus, developing a generalized model for recognizing unseen classes is very important and remains a major challenge to date. Existing methods solve the problem with general supervised recognition tasks based on the seen composition of the crop and the disease.

40 Views