TOWARDS THINNER CONVOLUTIONAL NEURAL NETWORKS THROUGH GRADUALLY GLOBAL PRUNING

- Submitted by: Zhengtao Wang
- Last updated: 13 September 2017 - 12:37pm
- Document Type: Poster
- Document Year: 2017
- Presenters: Zhengtao Wang
- Paper Code: ICIP1701


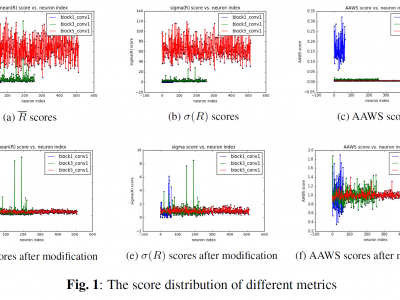

Deep network pruning is an effective way to reduce the storage and computation cost of deep neural networks when deploying them on resource-limited devices. Among the many pruning granularities, neuron-level pruning removes redundant neurons and filters from the model and results in thinner networks. In this paper, we propose a gradually global pruning scheme for neuron-level pruning. In each pruning step, a small percentage of neurons is selected and dropped across all layers in the model. We also propose a simple method to eliminate the biases in evaluating the importance of neurons, which makes the scheme feasible. Compared with layer-wise pruning schemes, our scheme avoids the difficulty of determining the redundancy in each layer and is more effective for deep networks. Our scheme automatically finds a thinner sub-network in the original network subject to a given performance requirement.
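The loop described in the abstract can be illustrated with a minimal Python sketch. It is not the poster's implementation: the abstract does not say how importance is measured or how the bias between layers is removed, so the per-layer mean normalization, the rule of keeping at least one neuron per layer, and the names `normalize_per_layer`, `global_prune_step`, `gradually_prune`, and `evaluate` are all assumptions made for illustration. A real pipeline would also recompute importance scores and fine-tune the network after each step.

```python
def normalize_per_layer(scores):
    """Divide each score by its layer's mean score.

    ASSUMPTION: this stands in for the bias-removal step, which the
    abstract mentions but does not specify.
    """
    normalized = {}
    for layer, layer_scores in scores.items():
        mean = sum(layer_scores.values()) / len(layer_scores)
        normalized[layer] = {
            neuron: (score / mean if mean > 0 else 0.0)
            for neuron, score in layer_scores.items()
        }
    return normalized


def global_prune_step(scores, fraction):
    """Drop roughly `fraction` of all remaining neurons, ranked across layers."""
    normalized = normalize_per_layer(scores)
    # One global ranking over every layer, least important first.
    ranked = sorted(
        (score, layer, neuron)
        for layer, layer_scores in normalized.items()
        for neuron, score in layer_scores.items()
    )
    budget = max(1, int(fraction * len(ranked)))
    remaining = {layer: dict(layer_scores) for layer, layer_scores in scores.items()}
    for _, layer, neuron in ranked:
        if budget == 0:
            break
        # ASSUMPTION: never empty a layer completely.
        if len(remaining[layer]) > 1:
            del remaining[layer][neuron]
            budget -= 1
    return remaining


def gradually_prune(scores, fraction, evaluate, min_performance):
    """Repeat small global pruning steps while performance stays acceptable.

    `evaluate` is a hypothetical callback that returns the performance of
    the network restricted to the neurons that are kept.
    """
    current = scores
    while True:
        candidate = global_prune_step(current, fraction)
        if candidate == current or evaluate(candidate) < min_performance:
            return current
        current = candidate


# Toy importance scores: the two layers live on very different scales.
scores = {
    "conv1": {0: 10.0, 1: 30.0, 2: 20.0},
    "fc1": {0: 0.125, 1: 0.375, 2: 0.25, 3: 0.25},
}
one_step = global_prune_step(scores, fraction=0.3)

# Toy performance proxy: the share of the original 7 neurons still kept.
thinner = gradually_prune(
    scores,
    fraction=0.3,
    evaluate=lambda kept: sum(len(v) for v in kept.values()) / 7,
    min_performance=0.6,
)
```

In this toy example the first step removes the lowest-ranked neuron from each layer. Ranking by raw scores would instead have removed two `fc1` neurons, simply because that layer's scores are on a smaller scale, which is the kind of bias the normalization is meant to counter.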