Documents

Poster

LEARNING TEMPORAL INFORMATION FROM SPATIAL INFORMATION USING CAPSNETS FOR HUMAN ACTION RECOGNITION

- Citation Author(s):

- Submitted by:

- Abdullah Algamdi

- Last updated:

- 8 May 2019 - 8:03am

- Document Type:

- Poster

- Document Year:

- 2019

- Event:

- Presenters:

- Abdullah Algamdi

- Paper Code:

- 2503

- Categories:

- Keywords:

- Log in to post comments

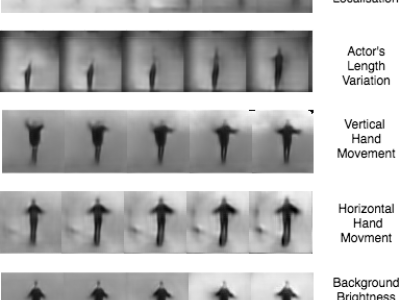

Capsule Networks (CapsNets) are recently introduced to overcome some of the shortcomings of traditional Convolutional Neural Networks (CNNs). CapsNets replace neurons in CNNs with vectors to retain spatial relationships among the features. In this paper, we propose a CapsNet architecture that employs individual video frames for human action recognition without explicitly extracting motion information. We also propose weight pooling to reduce the computational complexity and improve the classification accuracy by appropriately removing some of the extracted features. We show how the capsules of the proposed architecture can encode temporal information by using the spatial features extracted from several video frames. Compared with a traditional CNN of the same complexity, the proposed CapsNet improves action recognition performance by 12.11% and 22.29% on the KTH and UCF-sports datasets, respectively.