PROBMCL: SIMPLE PROBABILISTIC CONTRASTIVE LEARNING FOR MULTI-LABEL VISUAL CLASSIFICATION

- DOI: 10.60864/dwc7-he34
- Citation Author(s):
- Submitted by: Ahmad Sajedi
- Last updated: 6 June 2024 - 10:50am
- Document Type: Poster
- Document Year: 2024
- Event:
- Presenters: Ahmad Sajedi
- Paper Code: MLSP-P38.7
- Categories:

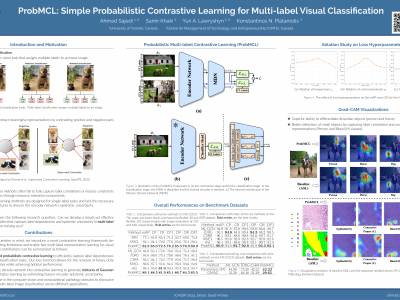

Multi-label image classification is a challenging task in many domains, including computer vision and medical imaging. Recent work has introduced graph-based and transformer-based methods to improve performance and capture label dependencies. However, these methods often rely on complex modules that are computationally heavy and lack interpretability. In this paper, we propose Probabilistic Multi-label Contrastive Learning (ProbMCL), a novel framework that addresses these challenges in multi-label image classification. Our simple yet effective approach employs supervised contrastive learning, in which samples that share enough labels with an anchor image, as determined by a decision threshold, form the positive set. This structure captures label dependencies by pulling positive pair embeddings together and pushing away negative samples, whose label overlap with the anchor falls below the threshold. We enhance representation learning by incorporating a mixture density network into contrastive learning and generating Gaussian mixture distributions to explore the epistemic uncertainty of the feature encoder. We validate the effectiveness of our framework through experiments on datasets from the computer vision and medical imaging domains. Our method outperforms existing state-of-the-art methods while maintaining a low computational footprint on both datasets. Visualization analyses also demonstrate that ProbMCL-learned classifiers maintain a meaningful semantic topology.
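
The threshold-based positive set described in the abstract can be illustrated with a small sketch. This is not the authors' implementation: the abstract does not say how label overlap is measured, so the Jaccard overlap used here is an assumption, and the names `label_overlap`, `positive_set`, and the toy `batch_labels` are hypothetical.

```python
from typing import List, Sequence


def label_overlap(a: Sequence[int], b: Sequence[int]) -> float:
    """Jaccard overlap between two multi-hot label vectors (assumed measure)."""
    shared = sum(1 for x, y in zip(a, b) if x and y)
    union = sum(1 for x, y in zip(a, b) if x or y)
    return shared / union if union else 0.0


def positive_set(labels: List[Sequence[int]], anchor: int, threshold: float) -> List[int]:
    """Indices of samples whose overlap with the anchor meets the threshold."""
    return [
        j for j, lab in enumerate(labels)
        if j != anchor and label_overlap(labels[anchor], lab) >= threshold
    ]


# Toy batch (hypothetical data): 4 images, 4 candidate labels each.
batch_labels = [
    [1, 1, 0, 0],  # anchor
    [1, 1, 1, 0],  # shares 2 of 3 labels in the union
    [0, 0, 1, 1],  # shares no labels
    [1, 0, 0, 0],  # shares 1 of 2 labels in the union
]

positives = positive_set(batch_labels, anchor=0, threshold=0.5)
# Everything else in the batch is treated as a negative for this anchor.
negatives = [j for j in range(len(batch_labels)) if j != 0 and j not in positives]
```

In a contrastive loss, embeddings of the samples in `positives` would be pulled toward the anchor and those in `negatives` pushed away. Raising the threshold shrinks the positive set toward samples with near-identical label sets.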