Presentation Slides

Sketching for Large-Scale Learning of Mixture Models

- Submitted by: Nicolas Keriven
- Last updated: 21 March 2016 - 4:00am
- Document Type: Presentation Slides
- Document Year: 2016
- Presenters: Nicolas Keriven
- Paper Code: 1365


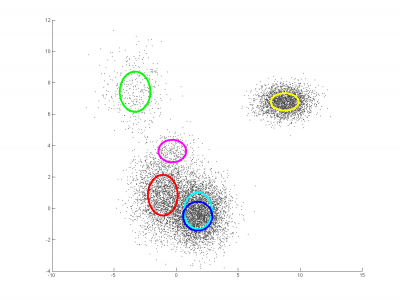

Learning parameters from voluminous data can be prohibitive in terms of memory and computational requirements. We propose a "compressive learning" framework where we first sketch the data by computing random generalized moments of the underlying probability distribution, then estimate mixture model parameters from the sketch using an iterative algorithm analogous to greedy sparse signal recovery. We exemplify our framework with the sketched estimation of Gaussian Mixture Models (GMMs). We experimentally show that our approach yields results comparable to the classical Expectation-Maximization (EM) technique while requiring significantly less memory and fewer computations when the number of database elements is large. We report large-scale experiments in speaker verification, where our approach makes it possible to fully exploit a corpus of 1000 hours of speech signal to learn a universal background model at scales computationally inaccessible to EM.
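To make the sketching step concrete, here is a minimal illustration in plain Python. It rests on assumptions not stated in the abstract: the "random generalized moments" are taken to be samples of the empirical characteristic function at Gaussian-distributed frequencies, and the names `draw_frequencies`, `Sketch`, and `gaussian_sketch` are hypothetical. The greedy estimation stage is not shown; the code only builds the sketch in a single pass and compares it with the closed-form sketch of a single Gaussian, which is the kind of quantity an estimation procedure would try to match.

```python
import cmath
import random


def draw_frequencies(m, dim, scale, rng):
    """Draw m frequency vectors with i.i.d. Gaussian entries (an assumed choice)."""
    return [[rng.gauss(0.0, scale) for _ in range(dim)] for _ in range(m)]


class Sketch:
    """Running average of exp(i * <w_j, x>) over all points seen so far."""

    def __init__(self, frequencies):
        self.frequencies = frequencies
        self.sums = [0j] * len(frequencies)
        self.count = 0

    def update(self, x):
        # Each point is folded into the m running sums and can then be discarded.
        for j, w in enumerate(self.frequencies):
            phase = sum(wk * xk for wk, xk in zip(w, x))
            self.sums[j] += cmath.exp(1j * phase)
        self.count += 1

    def value(self):
        return [s / self.count for s in self.sums]


def gaussian_sketch(frequencies, mean, variances):
    """Closed-form sketch of a diagonal-covariance Gaussian (its characteristic function)."""
    out = []
    for w in frequencies:
        phase = sum(wk * mk for wk, mk in zip(w, mean))
        decay = 0.5 * sum(wk * wk * vk for wk, vk in zip(w, variances))
        out.append(cmath.exp(1j * phase - decay))
    return out


rng = random.Random(0)
freqs = draw_frequencies(m=20, dim=2, scale=1.0, rng=rng)
sketch = Sketch(freqs)

# Synthetic data from one Gaussian component (illustrative values only).
mean, variances = [1.0, -2.0], [0.5, 2.0]
for _ in range(20000):
    sketch.update([rng.gauss(mu, var ** 0.5) for mu, var in zip(mean, variances)])

empirical = sketch.value()
model = gaussian_sketch(freqs, mean, variances)
max_gap = max(abs(e - t) for e, t in zip(empirical, model))
print(f"sketch size: {len(empirical)} complex numbers, max gap to model: {max_gap:.3f}")
```

In this illustration the sketch stays at 20 complex numbers no matter how many points are streamed through `update`, which is the source of the memory savings the abstract describes. Because the running sums are additive, sketches computed on separate chunks of a dataset could also be combined by adding their sums and counts.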