ICASSP is the world's largest and most comprehensive technical conference on signal processing and its applications. It provides a fantastic networking opportunity for like-minded professionals from around the world. The ICASSP 2016 conference will feature world-class presentations by internationally renowned speakers and cutting-edge session topics.

- Complex NMF under phase constraints based on signal modeling

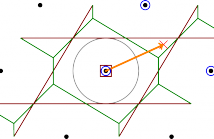

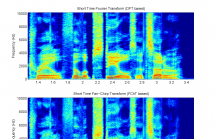

Nonnegative Matrix Factorization (NMF) is a powerful tool for decomposing mixtures of audio signals in the Time-Frequency (TF) domain. In the source separation framework, the phase of each extracted component must be recovered in order to synthesize time-domain signals. The Complex NMF (CNMF) model aims to jointly estimate the spectrogram and the phase of the sources, but the phase must be constrained to produce satisfactory-sounding results.
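To make the TF-domain decomposition concrete, here is a minimal sketch of plain NMF with the Lee-Seung multiplicative updates (Euclidean cost) applied to a magnitude spectrogram, followed by the simple baseline that gives every component the mixture's phase through a Wiener-like mask. This is not the CNMF model or the phase constraints described above; the function names are hypothetical, and any complex matrix can stand in for an STFT.

```python
import numpy as np

def nmf(V, rank, n_iter=200, eps=1e-12, seed=0):
    """Factorize a nonnegative matrix V (freq x time) as W @ H using
    Lee-Seung multiplicative updates for the Euclidean cost."""
    rng = np.random.default_rng(seed)
    F, T = V.shape
    W = rng.random((F, rank)) + eps
    H = rng.random((rank, T)) + eps
    for _ in range(n_iter):
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

def separate_with_mixture_phase(X, W, H, eps=1e-12):
    """Split a complex TF matrix X into one complex matrix per NMF component.
    Each component gets a Wiener-like magnitude mask and simply reuses the
    phase of the mixture (no phase modeling at all)."""
    V_hat = W @ H + eps
    components = []
    for k in range(W.shape[1]):
        mask = np.outer(W[:, k], H[k, :]) / V_hat  # real mask in [0, 1]
        components.append(mask * X)  # magnitude is masked, mixture phase kept
    return components
```

Because the masks are real, components that overlap in a TF bin all inherit the same mixture phase; this is the kind of limitation that jointly estimating the phase, as CNMF does, is meant to address.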

icassp2016_slides.pdf

- Improved Decoding of Analog Modulo Block Codes for Noise Mitigation

A drawback of digital transmission of analog signals is the unavoidable quantization error, which limits the achievable quality even under good channel conditions.

This saturation can be avoided by using analog transmission systems with discrete-time and quasi-continuous-amplitude encoding and decoding, e.g., Analog Modulo Block codes (AMB codes). The AMB code vectors are produced by multiplying a real-valued information vector with a real-valued generator matrix using modulo arithmetic.
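The encoding step can be sketched as a real matrix-vector product followed by an element-wise modulo fold. This is a minimal illustration under assumptions: the fold interval `[-period/2, period/2)`, the `period` parameter, and the helper name `amb_encode` are hypothetical choices, and the improved decoder from the paper is not reproduced.

```python
import numpy as np

def amb_encode(u, G, period=1.0):
    """Encode a real-valued information vector u with a real-valued generator
    matrix G, then fold every entry into [-period/2, period/2) (assumed convention)."""
    v = G @ u
    return (v + period / 2) % period - period / 2

# Example: a systematic generator, identity rows followed by one parity row.
u = np.array([0.1, -0.3])
G = np.array([[1.0, 0.0], [0.0, 1.0], [2.0, 3.0]])
c = amb_encode(u, G)  # parity entry 2*0.1 + 3*(-0.3) = -0.7 folds to 0.3
```

With identity rows in `G`, the first code symbols repeat the information vector, while the parity symbol wraps around the fold interval. That wrap-around is what lets the code expand distances between nearby inputs, and it is also what a decoder must undo.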

- Matlab Program for Wireless Powered Two Way Relay Channel (ICASSP 2016)

This Matlab program covers two optimization algorithms, namely semidefinite programming (SDP) and the block coordinate descent (BCD) method, for the wireless-powered two-way relay channel.
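As a generic illustration of the block coordinate descent idea only (not the TWRC beamforming problem that the Matlab program solves), the sketch below minimizes a toy objective ||A x + B y - c||^2 by alternately solving exactly for one block of variables while the other is held fixed. The objective and the function name `bcd_least_squares` are hypothetical.

```python
import numpy as np

def bcd_least_squares(A, B, c, n_iter=200):
    """Minimize ||A x + B y - c||^2 by block coordinate descent:
    alternately solve exactly for x (y fixed) and for y (x fixed)."""
    x = np.zeros(A.shape[1])
    y = np.zeros(B.shape[1])
    for _ in range(n_iter):
        # Block 1: least-squares fit of x to the residual left by y.
        x = np.linalg.lstsq(A, c - B @ y, rcond=None)[0]
        # Block 2: least-squares fit of y to the residual left by x.
        y = np.linalg.lstsq(B, c - A @ x, rcond=None)[0]
    return x, y
```

Each block update cannot increase the objective, so the iterates descend monotonically. For this convex toy problem they converge to the joint least-squares solution; in non-convex problems, BCD generally only guarantees a stationary point.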

The first algorithm is proposed in:

[1] S. Wang, Y.-C. Wu, and M. Xia, "Achieving global optimality in wirelessly-powered multi-antenna TWRC with lattice codes," in Proc. IEEE ICASSP'16, Shanghai, China, Mar. 2016, pp. 3556-3560.

The second algorithm is proposed in

- Linearly Augmented Deep Neural Network

Deep neural networks (DNNs) are a powerful tool for many large vocabulary continuous speech recognition (LVCSR) tasks. Training a very deep network is challenging, and pre-training techniques are needed to achieve the best results. In this paper, we propose a new type of network architecture, the Linearly Augmented Deep Neural Network (LA-DNN). This type of network augments each non-linear layer with a linear connection from the layer input to the layer output.
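The layer structure described above can be sketched as the sum of a nonlinear branch and a linear branch fed by the same input. This is a hypothetical forward pass only: the ReLU nonlinearity, the full (rather than, say, low-rank) linear matrix `L`, and the function names are assumptions, not details taken from the paper.

```python
import numpy as np

def la_dnn_layer(x, W, b, L):
    """One linearly augmented layer (illustrative): nonlinear branch
    relu(W x + b) plus a linear connection L x from layer input to output."""
    return np.maximum(W @ x + b, 0.0) + L @ x

def la_dnn_forward(x, layers):
    """Apply a stack of (W, b, L) layers in sequence."""
    for W, b, L in layers:
        x = la_dnn_layer(x, W, b, L)
    return x

# Two stacked layers with identity weights and identity linear connections.
x = np.array([1.0, -2.0])
layers = [(np.eye(2), np.zeros(2), np.eye(2))] * 2
y = la_dnn_forward(x, layers)  # layer 1 gives [2, -2], layer 2 gives [4, -2]
```

In the example, the negative input component is zeroed by the ReLU branch but still passes through the linear connection. One intuition for why this helps very deep networks is that such paths keep signals (and gradients) from being blocked by saturated or inactive units.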

- Low-rank Matrix Recovery via Entropy Function

The low-rank matrix recovery problem consists of reconstructing an unknown low-rank matrix from a few linear measurements, possibly corrupted by noise. One of the most popular methods for low-rank matrix recovery is nuclear-norm minimization, which seeks to simultaneously estimate the most significant singular values of the target low-rank matrix by adding a penalty term on its nuclear norm. In this paper, we introduce a new method that requires substantially fewer measurements for exact matrix recovery than nuclear-norm minimization.
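The nuclear-norm baseline mentioned above is usually implemented through singular value thresholding, the proximal operator of the nuclear norm, which shrinks every singular value by the same amount. The sketch below shows only that baseline building block; the entropy-function method proposed in the paper is not reproduced, and the helper names are hypothetical.

```python
import numpy as np

def nuclear_norm(X):
    """Sum of the singular values of X."""
    return np.linalg.svd(X, compute_uv=False).sum()

def singular_value_threshold(Y, tau):
    """Proximal operator of tau * ||X||_*: shrink every singular value of Y
    by tau and drop those that fall to zero (which lowers the rank)."""
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    s_shrunk = np.maximum(s - tau, 0.0)
    return (U * s_shrunk) @ Vt  # scales column j of U by s_shrunk[j]

Y = np.diag([3.0, 1.0, 0.5])
X = singular_value_threshold(Y, tau=0.8)  # singular values become 2.2, 0.2, 0
```

The uniform shrinkage penalizes large and small singular values alike, which is one reason alternative penalties that treat the most significant singular values differently are of interest.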

- Performance Analysis for Pilot-based 1-bit Channel Estimation with Unknown Quantization Threshold

Parameter estimation using quantized observations is important in many practical applications. Under a symmetric 1-bit setup consisting of a zero-threshold hard limiter, it is well known that the large-sample performance loss at low signal-to-noise ratios (SNRs) is moderate (a factor of 2/π, i.e., about -1.96 dB). This makes low-complexity analog-to-digital converters (ADCs) with 1-bit resolution a promising solution for future wireless communications and signal processing devices.
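The 2/π figure can be checked from the Fisher information of a single hard-limited Gaussian observation. For y = sign(θ + n) with n ~ N(0, σ²), P(y = +1) = Φ(θ/σ), so the Fisher information is φ(θ/σ)² / (σ² Φ(θ/σ)(1 - Φ(θ/σ))). At θ = 0 (the low-SNR limit) this equals 2/(πσ²), compared with 1/σ² without quantization. The sketch assumes a known zero threshold, so it does not model the unknown threshold studied in the paper; the helper names are hypothetical.

```python
import math

def std_normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def std_normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def fisher_info_1bit(theta, sigma):
    """Fisher information about theta in one observation sign(theta + n),
    n ~ N(0, sigma^2), i.e. a zero-threshold hard limiter."""
    z = theta / sigma
    p = std_normal_cdf(z)  # P(output = +1)
    return std_normal_pdf(z) ** 2 / (sigma ** 2 * p * (1.0 - p))

def fisher_info_unquantized(sigma):
    """Fisher information about theta in one observation theta + n."""
    return 1.0 / sigma ** 2

ratio = fisher_info_1bit(0.0, 1.0) / fisher_info_unquantized(1.0)
loss_db = 10.0 * math.log10(ratio)
print(f"{ratio:.4f} {loss_db:.2f} dB")  # 0.6366 -1.96 dB
```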

- Exploiting LSTM Structure in Deep Neural Networks for Speech Recognition

- Parallelizing WFST Speech Decoders (Poster)
