Documents

Poster

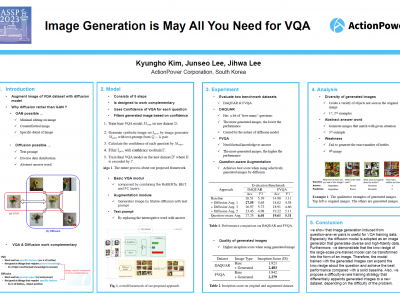

Image Generation is MAY All You Need for VQA

- Citation Author(s):

- Submitted by:

- Kyungho Kim

- Last updated:

- 19 May 2023 - 1:59am

- Document Type:

- Poster

- Document Year:

- 2023

- Event:

- Presenters:

- Kyungho Kim

- Paper Code:

- IVMSP-P9.11

- Categories:

- Log in to post comments

Visual Question Answering (VQA) stands to benefit from the boost of increasingly sophisticated Pretrained Language Model (PLM) and Computer Vision-based models. In particular, many language modality studies have been conducted using image captioning or question generation with the knowledge ground of PLM in terms of data augmentation. However, image generation of VQA has been implemented in a limited way to modify only certain parts of the original image in order to control the quality and uncertainty. In this paper, to address this gap, we propose a method that utilizes the diffusion model, pre-trained with various tasks and images, to inject the prior knowledge base into generated images and secure diversity without losing generality about the answer. In addition, we design an effective training strategy by considering the difficulty of questions to address the multiple images per QA pair and to compensate for the weakness of the diffusion model. VQA model trained on our strategy improves significant performance on the dataset that requires factual knowledge without any knowledge information in language modality.