

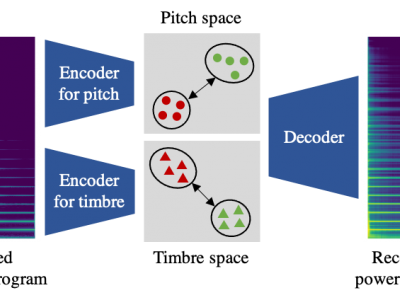

Pitch-Timbre Disentanglement of Musical Instrument Sounds Based on VAE-Based Metric Learning

- Citation Author(s):
- Submitted by: Keitaro Tanaka
- Last updated: 22 June 2021 - 1:14am
- Document Type: Presentation Slides
- Document Year: 2021
- Event:
- Presenters: Keitaro Tanaka
- Paper Code: AUD-4.6
- Categories:


This paper describes a representation learning method for disentangling an arbitrary musical instrument sound into latent pitch and timbre representations. Such pitch-timbre disentanglement has previously been achieved with a variational autoencoder (VAE), particularly for a predefined set of musical instruments, but the resulting latent pitch and timbre representations are widely scattered, which makes them hard to interpret. To mitigate this problem, we introduce a metric learning technique into a VAE with latent pitch and timbre spaces so that similar pitches or timbres are mapped close to each other and different ones are mapped far apart. Specifically, our VAE is trained with additional contrastive losses so that the latent distance between two arbitrary sounds is minimized when they share the same pitch or timbre and maximized when they differ. This training is weakly supervised: it uses only whether the pitches and timbres of two sounds are the same or not, rather than their actual values, which improves the generalization capability for unseen musical instruments. Experimental results show that the proposed method finds better-structured disentangled representations with pitch and timbre clusters, even for unseen musical instruments.
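To make the pairwise training signal concrete, the following is a minimal sketch of a margin-based contrastive loss applied separately to pitch and timbre latents. The exact loss used in the paper is not given in the abstract, so the squared-distance form, the `margin` parameter, and the function names `contrastive_loss` and `metric_terms` are assumptions chosen for illustration. Note that the only supervision passed in is a same/different flag per pair, which matches the weak supervision described above.

```python
import numpy as np


def contrastive_loss(z_a, z_b, same, margin=1.0):
    """Margin-based pairwise contrastive loss (assumed form, not taken from the paper).

    z_a, z_b: arrays of shape (batch, dim) holding latent vectors of paired sounds.
    same:     array of shape (batch,), 1.0 if the pair shares the attribute, else 0.0.
    """
    dist = np.linalg.norm(z_a - z_b, axis=1)
    # Pairs with the same attribute are pulled together.
    pull = same * dist ** 2
    # Pairs with different attributes are pushed apart until they exceed the margin.
    push = (1.0 - same) * np.maximum(0.0, margin - dist) ** 2
    return float(np.mean(pull + push))


def metric_terms(pitch_a, pitch_b, timbre_a, timbre_b,
                 same_pitch, same_timbre, margin=1.0):
    """Sum of the pitch-space and timbre-space contrastive terms (hypothetical helper).

    In a full model these terms would be added to the usual VAE objective;
    the weighting between them is left out of this sketch.
    """
    return (contrastive_loss(pitch_a, pitch_b, same_pitch, margin)
            + contrastive_loss(timbre_a, timbre_b, same_timbre, margin))
```

In this sketch, two sounds played at the same pitch on different instruments would be passed with `same_pitch = 1.0` and `same_timbre = 0.0`, so their pitch latents are drawn together while their timbre latents are pushed at least `margin` apart.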