Loss Switching Fusion with Similarity Search for Video Classification

- Submitted by: Lei Wang
- Last updated: 16 September 2019 - 1:04am
- Document Type: Poster
- Document Year: 2019
- Presenters: Lei Wang
- Paper Code: 2128


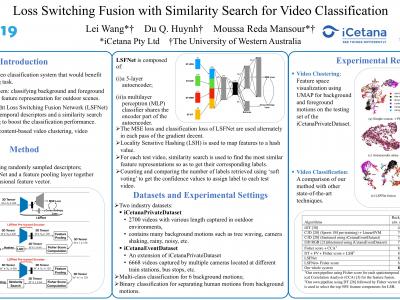

From video streaming to security and surveillance applications, video data play an important role in daily life. However, managing large amounts of video data and retrieving the information most useful to the user remain challenging tasks. In this paper, we propose a novel video classification system that benefits the scene understanding task. We define our classification problem as classifying background and foreground motions in outdoor scenes using the same feature representation. This means that the feature representation must be robust and adaptable to different classification tasks. We propose a lightweight Loss Switching Fusion Network (LSFNet) for the fusion of spatiotemporal descriptors, together with a similarity search scheme with soft voting to boost classification performance. The proposed system has a variety of potential applications, such as content-based video clustering and video filtering. Evaluation results on two private industry datasets show that our system is robust both in classifying different background motions and in detecting human motions against these background motions.
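The abstract does not spell out how the similarity search with soft voting works, so the following is only a minimal sketch of one common reading of that idea, not the authors' implementation. It assumes each gallery video is stored as a descriptor paired with a vector of class probabilities, that similarity is measured with cosine similarity, and that the soft vote is an unweighted average over the `k` most similar gallery items. The names `cosine`, `soft_vote`, `gallery`, and `k` are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def soft_vote(query, gallery, k=3):
    """Classify `query` by averaging the class probabilities of its k nearest neighbours.

    `gallery` is a list of (descriptor, class_probs) pairs. This layout is an
    assumption made for illustration; it is not taken from the paper.
    """
    # Similarity search: rank gallery items by similarity to the query, keep the top k.
    nearest = sorted(gallery, key=lambda item: cosine(query, item[0]), reverse=True)[:k]

    # Soft voting: average the neighbours' probability vectors instead of
    # counting their hard labels.
    n_classes = len(nearest[0][1])
    votes = [0.0] * n_classes
    for _, probs in nearest:
        for c, p in enumerate(probs):
            votes[c] += p
    votes = [v / len(nearest) for v in votes]

    # Predicted label is the class with the highest averaged probability.
    label = max(range(n_classes), key=votes.__getitem__)
    return label, votes


# Toy gallery: two items near class 0 and two near class 1.
gallery = [
    ([1.0, 0.0], [0.9, 0.1]),
    ([0.9, 0.1], [0.8, 0.2]),
    ([0.0, 1.0], [0.1, 0.9]),
    ([0.1, 0.9], [0.2, 0.8]),
]
label, votes = soft_vote([1.0, 0.05], gallery, k=2)
```

Averaging probabilities rather than hard labels lets a confident neighbour outweigh an uncertain one; weighting each vote by its similarity score would be a natural variant, but whether the poster does so is not stated in the abstract.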