- Read more about Improved Deep Speaker Localization and Tracking: Revised Training Paradigm and Controlled Latency

Even without a separate tracking algorithm, the directions of arrival (DOAs) of moving talkers can be estimated with a deep neural network (DNN), provided that the movement trajectories used for training generalize to real signals. Previously, we proposed a framework for generating training data with time-variant source activity and sudden DOA changes. Slowly moving sources could be seen as a special case of such changes, but were not explicitly modeled. In this paper, we extend this framework by using small jumps between neighboring discrete DOAs to simulate gradual movements.
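The idea of simulating gradual movement through small jumps between neighboring discrete DOAs can be sketched as follows. This is only an illustration, not the authors' data generator: the grid of 37 DOA classes, the per-frame jump probability, and the function name `simulate_doa_trajectory` are all assumptions made for the example.

```python
import random


def simulate_doa_trajectory(num_frames, num_classes=37, jump_prob=0.1,
                            start=None, seed=0):
    """Return one DOA class index per frame.

    Hypothetical sketch: the source occasionally hops to a neighboring
    DOA class, so the index never changes by more than one per frame.
    """
    rng = random.Random(seed)
    idx = rng.randrange(num_classes) if start is None else start
    trajectory = []
    for _ in range(num_frames):
        if rng.random() < jump_prob:
            step = rng.choice((-1, 1))
            # Clamp to the valid range of the (assumed) discrete DOA grid.
            idx = min(max(idx + step, 0), num_classes - 1)
        trajectory.append(idx)
    return trajectory


if __name__ == "__main__":
    print(simulate_doa_trajectory(20, jump_prob=0.3, seed=1))
```

A sudden DOA change, as in the earlier framework, would correspond to allowing steps larger than one class; restricting the step to one class is what makes the trajectory look gradual.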

poster.pdf

26 Views

- Read more about Exploiting Temporal Context in CNN Based Multisource DOA Estimation

Supervised learning methods are a powerful tool for direction of arrival (DOA) estimation because they can cope with adverse conditions where simplified models fail. In this work, we consider a previously proposed convolutional neural network (CNN) approach that estimates the DOAs for multiple sources from the phase spectra of the microphones. For speech, specifically, the approach was shown to work well even when trained entirely on synthetically generated data. However, as each frame is processed separately, temporal context cannot be taken into account.
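As a rough illustration of the kind of input such a network consumes, the sketch below computes the phase spectrum of one time frame per microphone. It is a minimal example under stated assumptions, not the authors' pipeline: it uses a naive DFT from the standard library (a real system would use an FFT and typically a window), and the names `phase_spectrum` and `phase_map` are hypothetical.

```python
import cmath
import math


def phase_spectrum(frame):
    """Phase (radians) of the DFT bins 0..N/2 of one real-valued frame."""
    n = len(frame)
    phases = []
    for k in range(n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(frame))
        phases.append(cmath.phase(coeff))
    return phases


def phase_map(frames):
    """Stack per-microphone phase spectra: one row per microphone."""
    return [phase_spectrum(frame) for frame in frames]


if __name__ == "__main__":
    n, k = 16, 2
    # Two microphones observing the same sinusoid with a phase offset of 0.3 rad.
    mic1 = [math.cos(2 * math.pi * k * i / n + 0.5) for i in range(n)]
    mic2 = [math.cos(2 * math.pi * k * i / n + 0.2) for i in range(n)]
    pm = phase_map([mic1, mic2])
    print(pm[0][k] - pm[1][k])  # inter-microphone phase difference at bin k
```

The phase difference between microphones at a given frequency bin reflects the relative delay of the source, which is why phase spectra carry DOA information. Because each such map covers a single frame, it contains no information about neighboring frames.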

22 Views

- Read more about A Double-Cross-Correlation Processor for Blind Sampling Rate Offset Estimation in Acoustic Sensor Networks

Signal synchronization in wireless acoustic sensor networks requires accurate estimation of the sampling rate offset (SRO) that is inevitably present in signals acquired by the sensors of ad-hoc networks. Although some sophisticated methods for blind SRO estimation have recently been proposed in this young field of research, new ideas and concepts are still needed, especially for robust approaches with low computational complexity.

48 Views

- Read more about Control Architecture of the Double-Cross-Correlation Processor for Sampling-Rate-Offset Estimation in Acoustic Sensor Networks

The distributed hardware of acoustic sensor networks has inconsistent local sampling frequencies, which is detrimental to signal processing. Fundamentally, the sampling rate offset (SRO) relates the discrete-time signals acquired by different sensor nodes nonlinearly. As a result, retrieving the SRO from the available signals requires nonlinear estimation, such as double-cross-correlation processing (DXCP), and frequently yields biased estimates. SRO compensation by asynchronous sampling rate conversion (ASRC) of the signals then leaves an unacceptable residual.
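To give a feel for why even a small SRO or a small residual after compensation matters, the sketch below computes how far two nodes drift apart over time. It assumes a simple first-order model in which one node samples at a rate of `fs_hz * (1 + sro_ppm * 1e-6)` relative to the other; the function name and the numbers in the example are illustrative only and are not taken from the paper.

```python
def accumulated_drift_samples(duration_s, fs_hz, sro_ppm):
    """Approximate time drift, in samples, between two nodes.

    Assumed first-order model: the drift grows linearly with the number
    of elapsed samples, scaled by the SRO given in parts per million.
    """
    num_samples = duration_s * fs_hz
    return num_samples * sro_ppm * 1e-6


if __name__ == "__main__":
    # Hypothetical example: 50 ppm offset at 16 kHz over one minute.
    print(accumulated_drift_samples(60, 16000, 50))
```

Under this model the misalignment grows without bound as long as any offset remains, which is why a biased SRO estimate still leaves a growing residual after resampling.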

133 Views

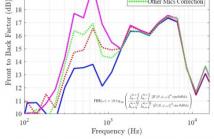

- Read more about Reducing Modal Error Propagation Through Correcting Mismatched Microphone Gains Using RAPID

Microphone array calibration is required to accurately capture the information in an audio source recording. Existing calibration methods require expensive hardware and setup procedures to compute filters for correcting microphone responses. Typically, such methods struggle to extend measurement accuracy to low frequencies. As a result, the error due to microphone gain mismatch propagates to all the modes in the spherical harmonic domain representation of a signal.

22 Views

This paper considers the problem of estimating K angles of arrival (AoAs) using an array of M > K microphones. We assume the source signal is a human voice and hence unknown to the receiver. Moreover, the signal components that arrive over the K spatial paths are strongly correlated, since they are delayed copies of the same source signal. Past works have successfully extracted the AoA of the direct path, or have assumed specific types of signals or channels to derive the subsequent (multipath) AoAs.

poster_4294.pdf

30 Views

- Read more about Focusing and frequency smoothing for arbitrary arrays with application to speaker localization

16 Views