- Read more about OPTIMIZING LATENT SPACE DIRECTIONS FOR GAN-BASED LOCAL IMAGE EDITING

- Log in to post comments

LELSD ICASSP.pdf

LELSD-Poster.pdf

- Categories:

11 Views

11 Views

- Read more about Computational coherent imaging for accommodation-invariant near-eye displays

- Log in to post comments

We present a computational accommodation-invariant near-eye display, which relies on imaging with coherent light and utilizes static optics together with convolutional neural network-based preprocessing. The network and the display optics are co-optimized to obtain a depth-invariant display point spread function, and thus relieve the conflict between accommodation and ocular vergence cues that typically exists in conventional near-eye displays.

- Categories:

36 Views

36 Views

- Read more about Domain Agnostic Video Prediction from Motion Selective Kernels

- Log in to post comments

Existing conditional video prediction approaches train a network from large databases and generalise to previously unseen data. We take the opposite stance, and introduce a model that learns from the first frames of a given video and extends its content and motion, to, \eg double its length. To this end, we propose a dual network that can use in a flexible way both dynamic and static convolutional motion kernels, to predict future frames. We demonstrate experimentally the robustness of our approach on challenging videos in-the-wild and show that it is competitive related baselines.

- Categories:

24 Views

24 Views

Image collections, if critical aspects of image content are exposed, can spur research and practical applications in many domains. Supervised machine learning may be the only feasible way to annotate very large collections. However, leading approaches rely on large samples of completely and accurately annotated images. In the case of a large forensic collection that we are aiming to annotate, neither the complete annotation nor the large training samples can be feasibly produced. We, therefore, investigate ways to assist manual annotation efforts done by forensic experts.

- Categories:

25 Views

25 Views

- Categories:

33 Views

33 Views

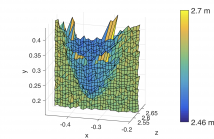

- Read more about IMPROVING LIDAR DEPTH RESOLUTION WITH DITHER

- Log in to post comments

Using detector arrays can speed up lidar systems by parallelizing acquisition.

However, current SPAD arrays have time bins longer than

typical laser pulse durations, resulting in measurement errors dominated

by quantization. We propose an optical time-of-flight system

that uses subtractive dither to improve image depth resolution.

Modeling the measurement noise with a generalized Gaussian distribution

further improves estimation error in simulations, although

model mismatch prevents the same advantage for our experimental

- Categories:

118 Views

118 Views

- Read more about A MATRIX-FREE RECONSTRUCTION METHOD FOR COMPRESSIVE FOCAL PLANE ARRAY IMAGING

- Log in to post comments

In this study, we propose a novel algorithm for compressive imaging using digital micromirror device (DMD) modulated focal plane array (FPA) data. In this setting, DMD modulates the scene in the image domain by blocking some of the pixels at a higher resolution level. For reconstruction, a regularized optimization problem is solved, whereas reconstruction time is crucial for a practical compressive sensing application.

- Categories:

16 Views

16 Views

- Read more about MULTI-EXPOSURE FUSION WITH CNN FEATURES

- Log in to post comments

- Categories:

29 Views

29 Views