DCC 2021 Virtual Conference - The Data Compression Conference (DCC) is an international forum for current work on data compression and related applications. Both theoretical and experimental work are of interest. Visit the DCC 2021 website.

- Read more about Multiscale Point Cloud Geometry Compression

Recent years have witnessed the growth of point cloud based applications for both immersive media and 3D sensing for autonomous driving, owing to the realistic and fine-grained representation of 3D objects and scenes that point clouds provide. However, compressing sparse, unstructured, and high-precision 3D points for efficient communication is a challenging problem. In this paper, leveraging the sparse nature of the point cloud, we propose a multiscale end-to-end learning framework that hierarchically reconstructs the 3D Point Cloud Geometry (PCG) via progressive re-sampling.

806 Views

- Read more about SLFC: Scalable Light Field Coding

Light field imaging enables post-processing capabilities such as refocusing, changing the view perspective, and depth estimation. Because light field images are represented by multiple views, they contain a huge amount of data, which makes compression necessary. Although there are some proposals for efficiently compressing light field images, their main focus is on encoding efficiency. Important functionalities such as viewpoint and quality scalability, random access, and uniform quality distribution have not been addressed adequately.

73 Views

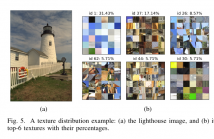

- Read more about JQF: Optimal JPEG Quantization Table Fusion by Simulated Annealing on Texture Images and Predicting Textures

JPEG has been a widely used lossy image compression codec for nearly three decades. The JPEG standard allows the use of customized quantization tables; however, finding an optimal quantization table within an acceptable computational cost is still a challenging problem. This work tries to resolve the trade-off between computational cost and image-specific optimality by introducing a new concept of texture mosaic images.
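As background for the abstract above, the following minimal sketch shows what a quantization table does to a block of DCT coefficients: each coefficient is divided by the matching table entry and rounded, and the decoder multiplies back. It is not the paper's fusion or simulated-annealing method. The function names `quantize` and `dequantize` and the 2x2 toy values are assumptions chosen for illustration; real JPEG blocks are 8x8.

```python
def quantize(block, table):
    """Divide each DCT coefficient by its table entry and round to an integer level.

    Note: Python's round() resolves exact .5 ties to the nearest even integer,
    which may differ from a particular encoder's rounding rule.
    """
    return [[round(c / q) for c, q in zip(brow, trow)]
            for brow, trow in zip(block, table)]


def dequantize(levels, table):
    """Reconstruct approximate coefficients by multiplying levels by table entries."""
    return [[lv * q for lv, q in zip(lrow, trow)]
            for lrow, trow in zip(levels, table)]


# Toy 2x2 excerpt (hypothetical values); larger table entries discard more detail.
coeffs = [[-415, 30], [5, -22]]
table = [[16, 11], [12, 12]]

levels = quantize(coeffs, table)
restored = dequantize(levels, table)
```

Larger table entries give coarser levels and smaller files at the cost of reconstruction error, which is why the choice of table affects both rate and quality.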

71 Views

106 Views

- Read more about Deep Scattering Network with Max-Pooling

32 Views

- Read more about On Universal Codes for Integers: Wallace Tree, Elias Omega and Beyond

87 Views

- Read more about An Efficient QP Variable Convolutional Neural Network Based In-loop Filter for Intra Coding

In this paper, a novel QP-variable convolutional neural network based in-loop filter is proposed for VVC intra coding. To avoid training and deploying multiple networks, we develop an efficient QP attention module (QPAM) that captures compression noise levels for different QPs and emphasizes meaningful features along the channel dimension. We then embed QPAM into the residual block and, based on it, design a network architecture that can be controlled for different QPs.

61 Views

- Read more about PHONI: Streamed Matching Statistics with Multi-Genome References

Computing the matching statistics of patterns with respect to a text is a fundamental task in bioinformatics, but a formidable one when the text is a highly compressed genomic database. Bannai et al. gave an efficient solution for this case, which Rossi et al. recently implemented, but it uses two passes over the patterns and buffers a pointer for each character during the first pass. In this paper, we simplify their solution and make it streaming, at the cost of slowing it down slightly.
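To make the term concrete, here is a naive sketch of matching statistics, restricted to lengths: for each position `i` in the pattern, it records the length of the longest prefix of `pattern[i:]` that occurs somewhere in the text. This brute-force version is for illustration only and is not the streamed, compressed-index algorithm the paper describes. The function name `matching_statistics` is an assumption.

```python
def matching_statistics(pattern: str, text: str) -> list:
    """Return ms where ms[i] is the length of the longest prefix of
    pattern[i:] that occurs as a substring of text (naive, for illustration)."""
    ms = []
    for i in range(len(pattern)):
        length = 0
        # Extend the match one character at a time while it still occurs in text.
        while (i + length < len(pattern)
               and pattern[i:i + length + 1] in text):
            length += 1
        ms.append(length)
    return ms


example = matching_statistics("abcx", "zabcabd")
```

Each substring test scans the whole text, so this approach becomes impractical for genome-scale inputs; that cost is the motivation for index-based solutions.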

dcc21phoni.s.pdf

95 Views

- Read more about A Disk-Based Index for Trajectories with an In-Memory Compressed Cache

We present a representation of trajectories of objects moving through space without any constraint. It combines an in-memory cached index based on compact data structures with a classic disk-based strategy. The first structure allows some loss of precision, which is refined by the second component. This approach reduces the number of disk accesses. Compared with a classical index such as the MVR-tree, this structure obtains competitive times in queries such as time slice and kNN, and sharply outperforms it in time interval queries.

dcc2021.pdf

31 Views

- Read more about Video Enhancement Network Based on Max-pooling and Hierarchical Feature Fusion

In this paper, we propose an efficient convolutional neural network to enhance the quality of video compressed with the HEVC standard. The model is composed of a max-pooling module and a hierarchical feature fusion module. The max-pooling module extracts features at different scales and enlarges the receptive field of the model without stacking too many convolution layers. The hierarchical feature fusion module accurately aligns features from different scales and fuses them efficiently.

DCCPPT.pdf

55 Views