The International Conference on Image Processing (ICIP), sponsored by the IEEE Signal Processing Society, is the premier forum for the presentation of technological advances and research results in the fields of theoretical, experimental, and applied image and video processing. ICIP, held annually since 1994, brings together leading engineers and scientists in image and video processing from around the world.

- CANNYGAN: Edge-Preserving Image Translation with Disentangled Features

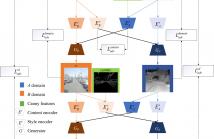

Image-to-image translation often suffers from missing texture and edge information. In this paper, we propose a framework that translates images while preserving more realistic textures and details. To this end, we disentangle the samples into a shared content space and a domain-specific style space. Then, because outlines and textures in the source domain are often blurred, we introduce the classic Canny edge detection algorithm to encode boundary and edge information in the content latent space.
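
For readers unfamiliar with it, the classic Canny pipeline mentioned above (Gaussian smoothing, Sobel gradients, non-maximum suppression, double thresholding with hysteresis) can be sketched in NumPy as follows. This only produces a binary edge map; how CANNYGAN encodes that map into its content latent space is not shown. The function name `canny_edges`, the default `sigma`, and the `low`/`high` thresholds (expressed as fractions of the maximum gradient magnitude) are illustrative assumptions, not values from the paper.

```python
import numpy as np


def _correlate2d(img, kernel):
    """Same-size 2D correlation with edge-replicated borders."""
    kh, kw = kernel.shape
    ph, pw = kh // 2, kw // 2
    padded = np.pad(img, ((ph, ph), (pw, pw)), mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for i in range(kh):
        for j in range(kw):
            out += kernel[i, j] * padded[i:i + img.shape[0], j:j + img.shape[1]]
    return out


def _gaussian_kernel(sigma):
    """2D Gaussian with radius 3*sigma, normalised to sum to 1."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=float)
    g = np.exp(-(x ** 2) / (2 * sigma ** 2))
    g /= g.sum()
    return np.outer(g, g)


def canny_edges(gray, sigma=1.0, low=0.1, high=0.2):
    """Binary Canny edge map; thresholds are fractions of the max gradient."""
    # 1. Smooth to suppress noise.
    img = _correlate2d(np.asarray(gray, dtype=float), _gaussian_kernel(sigma))
    # 2. Sobel gradients (gx grows rightward, gy grows downward).
    sobel_x = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=float)
    gx = _correlate2d(img, sobel_x)
    gy = _correlate2d(img, sobel_x.T)
    mag = np.hypot(gx, gy)
    if mag.max() > 0:
        mag /= mag.max()
    angle = np.rad2deg(np.arctan2(gy, gx)) % 180.0

    # 3. Non-maximum suppression along the quantised gradient direction.
    h, w = mag.shape
    nms = np.zeros_like(mag)
    for i in range(1, h - 1):
        for j in range(1, w - 1):
            a = angle[i, j]
            if a < 22.5 or a >= 157.5:      # horizontal gradient
                n1, n2 = mag[i, j - 1], mag[i, j + 1]
            elif a < 67.5:                  # down-right diagonal
                n1, n2 = mag[i - 1, j - 1], mag[i + 1, j + 1]
            elif a < 112.5:                 # vertical gradient
                n1, n2 = mag[i - 1, j], mag[i + 1, j]
            else:                           # down-left diagonal
                n1, n2 = mag[i - 1, j + 1], mag[i + 1, j - 1]
            if mag[i, j] >= n1 and mag[i, j] >= n2:
                nms[i, j] = mag[i, j]

    # 4. Double threshold + hysteresis: keep weak pixels 8-connected to edges.
    strong = nms >= high
    weak = (nms >= low) & ~strong
    edges = strong.copy()
    while True:
        padded = np.pad(edges, 1, mode="constant")
        neighbour = np.zeros_like(edges)
        for di in (-1, 0, 1):
            for dj in (-1, 0, 1):
                neighbour |= padded[1 + di:1 + di + h, 1 + dj:1 + dj + w]
        grown = edges | (weak & neighbour)
        if (grown == edges).all():
            return edges
        edges = grown


if __name__ == "__main__":
    step = np.zeros((16, 16))
    step[:, 8:] = 1.0  # vertical step edge between columns 7 and 8
    print(np.argwhere(canny_edges(step)[8]).ravel())
```

In practice a library routine such as OpenCV's `cv2.Canny` or scikit-image's `skimage.feature.canny` would replace this loop-based version; the explicit form just makes each stage visible.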

61 Views

- RANGE IMAGE BASED POINT CLOUD COLORIZATION USING CONDITIONAL GENERATIVE MODEL

Three-dimensional (3D) point clouds have emerged as a medium for representing real-world scenes and objects. However, a considerable proportion of point clouds lack color attribute information because device or environmental limitations prevent it from being captured during acquisition. This poses a great challenge for the efficient management and use of point clouds. To address this problem, we introduce an automatic colorization scheme for 3D point clouds based on a deep generative network.
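
The title indicates that colorization is carried out on range images. A common way to obtain a range image from a LiDAR point cloud is spherical projection, sketched below in NumPy. The sensor parameters (64 × 1024 resolution, +3°/-25° vertical field of view, typical of a 64-beam spinning LiDAR) and the function name `to_range_image` are assumptions for illustration; the paper's own projection may differ.

```python
import numpy as np


def to_range_image(points, height=64, width=1024, fov_up_deg=3.0, fov_down_deg=-25.0):
    """Spherical projection of an (N, 3) point cloud to an H x W range image.

    Returns the range image (-1 where empty) and, per pixel, the index of the
    nearest point that landed there (-1 where empty), so per-pixel predictions
    such as colours can be copied back to the points.
    """
    points = np.asarray(points, dtype=float)
    x, y, z = points[:, 0], points[:, 1], points[:, 2]
    r = np.linalg.norm(points, axis=1)
    valid = r > 0                                    # drop points at the sensor origin

    fov_down = np.deg2rad(fov_down_deg)
    fov = np.deg2rad(fov_up_deg) - fov_down          # total vertical field of view
    yaw = np.arctan2(y, x)                           # azimuth in [-pi, pi]
    pitch = np.arcsin(np.clip(z / np.where(valid, r, 1.0), -1.0, 1.0))  # elevation

    u = 0.5 * (1.0 - yaw / np.pi) * width            # column coordinate
    v = (1.0 - (pitch - fov_down) / fov) * height    # row coordinate (top = fov_up)
    cols = np.clip(np.floor(u), 0, width - 1).astype(int)
    rows = np.clip(np.floor(v), 0, height - 1).astype(int)

    # When several points fall into one pixel, keep the nearest one.
    pix = (rows * width + cols)[valid]
    rng = r[valid]
    ids = np.nonzero(valid)[0]
    order = np.lexsort((rng, pix))                   # sort by pixel, then by range
    _, first = np.unique(pix[order], return_index=True)
    keep = order[first]

    image = np.full(height * width, -1.0)
    index = np.full(height * width, -1, dtype=int)
    image[pix[keep]] = rng[keep]
    index[pix[keep]] = ids[keep]
    return image.reshape(height, width), index.reshape(height, width)
```

A per-pixel RGB prediction `rgb` of shape (H, W, 3) could then be copied back to an (N, 3) `colors` array with `colors[idx[idx >= 0]] = rgb[idx >= 0]`. Points hidden behind a nearer point in the same pixel receive no colour in this simple scheme.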

53 Views

- Recognizing Material of Covered Object: A Case Study with Graffiti

Recognizing materials using image analysis is a classic problem. However, little research has addressed images with visual impediments such as noise, obstacles, or paint. This paper introduces the problem of recognizing covered materials that are visually distorted (e.g., materials covered by graffiti).

31 Views

This document contains the slides from the ICIP 2019 presentation of the paper "DVDnet: A Fast Network for Deep Video Denoising".

148 Views

- EFFICIENT FINE-TUNING OF NEURAL NETWORKS FOR ARTIFACT REMOVAL IN DEEP LEARNING FOR INVERSE IMAGING PROBLEMS

While deep neural networks trained to solve inverse imaging problems (such as super-resolution, denoising, or inpainting) regularly achieve new state-of-the-art restoration performance, this gain is often accompanied by undesired artifacts in their outputs. These artifacts are usually specific to the network architecture, the training procedure, or the test input image used for the inverse imaging problem at hand. In this paper, we propose a fast, efficient post-processing method for reducing these artifacts.

32 Views

- Computing Vessel Velocity from Single Perspective Projection Images

We present an image-based approach to estimating the velocity of moving vessels from their traces on the water surface. Vessels moving at constant heading and speed display a familiar V-shaped wake pattern that differs from one vessel to another only in the wavelength of its transverse and divergent components. This wavelength is related to vessel velocity. We use planar homography and natural constraints on the geometry of ships’ wake crests to compute vessel velocity from single optical images acquired by conventional cameras.
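
The abstract does not spell out the wavelength-to-velocity relation. For the transverse waves of a Kelvin wake in deep water, the textbook relation is that these waves travel with the vessel, so their phase speed equals the vessel speed, and the deep-water dispersion relation gives `V = sqrt(g * λ / (2π))`, where `λ` is the transverse wavelength. The sketch below assumes a known image-to-water-plane homography `H` (output in metres) and two image points on consecutive transverse crests along the sailing line. `H`, the pixel coordinates, and the function names are hypothetical, and the paper's additional crest-geometry constraints are not reproduced.

```python
import math

import numpy as np

G = 9.81  # gravitational acceleration, m/s^2


def image_to_plane(H, pixels):
    """Map (N, 2) pixel coordinates to water-plane coordinates with a 3x3 homography."""
    pixels = np.asarray(pixels, dtype=float)
    homog = np.hstack([pixels, np.ones((len(pixels), 1))])
    mapped = homog @ np.asarray(H, dtype=float).T
    return mapped[:, :2] / mapped[:, 2:3]           # dehomogenise


def speed_from_transverse_wavelength(wavelength_m):
    """Deep-water Kelvin wake: V = sqrt(g * lambda / (2 * pi)) in m/s."""
    return math.sqrt(G * wavelength_m / (2.0 * math.pi))


def vessel_speed(H, crest_a_px, crest_b_px):
    """Speed in m/s from two pixels on consecutive transverse crests along the track."""
    a, b = image_to_plane(H, [crest_a_px, crest_b_px])
    wavelength = float(np.linalg.norm(b - a))       # crest spacing on the water plane, metres
    return speed_from_transverse_wavelength(wavelength)


if __name__ == "__main__":
    # Hypothetical homography: pure scaling of 2 pixels per metre (no perspective).
    H = np.diag([0.5, 0.5, 1.0])
    v = vessel_speed(H, (100.0, 200.0), (100.0, 240.0))
    print(f"{v:.2f} m/s ({v * 1.943844:.2f} kn)")
```

Because the speed grows only with the square root of the wavelength, a 10% error in the measured crest spacing yields roughly a 5% error in the speed estimate.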

43 Views

- CLUSTERING IMAGES BY UNMASKING - A NEW BASELINE

23 Views