- DSP algorithm implementation in hardware and software

- Compilers and tools for DSP implementation

- Algorithm and architecture co-optimization

- Programmable and reconfigurable DSP architectures

- Low-power signal processing techniques and architectures

- System-on-chip architectures for signal processing

- Complexity Analysis of Next-Generation VVC Encoding and Decoding

- Log in to post comments

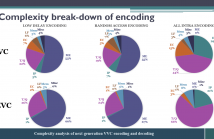

While the next-generation video compression standard, Versatile Video Coding (VVC), provides superior compression efficiency, its computational complexity increases dramatically. This paper thoroughly analyzes this complexity for both the encoder and the decoder of VVC Test Model 6, by quantifying the complexity breakdown of each coding tool and measuring the complexity and memory requirements of VVC encoding and decoding.

136 Views

- Application Informed Motion Signal Processing for Finger Motion Tracking Using Wearable Sensors

65 Views

- Soft-Output Finite Alphabet Equalization for mmWave Massive MIMO

22 Views

A Turing machine is a model describing the fundamental limits of any realizable computer, digital signal processor (DSP), or field-programmable gate array (FPGA). This paper shows that there exist very simple linear time-invariant (LTI) systems that cannot be simulated on a Turing machine. In particular, it considers the linear system described by the voltage-current relation of an ideal capacitor. For this system, it is shown that there exist continuously differentiable, computable input signals for which the output signal is a continuous function that is not computable.
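As a worked reference, assume the capacitor voltage $v(t)$ is taken as the input and the current $i(t)$ as the output; this is the direction in which the system is a differentiator, which matches the abstract's setting of continuously differentiable inputs and merely continuous outputs. The operator symbol $\mathcal{T}$ is notation introduced here for illustration, not the paper's. Linearity and time invariance follow directly from the linearity of differentiation:

```latex
\begin{aligned}
i(t) &= (\mathcal{T}v)(t) = C\,\frac{\mathrm{d}v(t)}{\mathrm{d}t}, \qquad C > 0, \\
\mathcal{T}(\alpha v_1 + \beta v_2) &= \alpha\,\mathcal{T}v_1 + \beta\,\mathcal{T}v_2 \quad \text{(linearity)}, \\
\bigl(\mathcal{T}\,v(\cdot - \tau)\bigr)(t) &= (\mathcal{T}v)(t - \tau) \quad \text{(time invariance)}, \\
H(j\omega) &= j\omega C \quad \text{(frequency response)}.
\end{aligned}
```

The gain $|H(j\omega)| = \omega C$ grows without bound in $\omega$, so $\mathcal{T}$ is an unbounded operator. This is the usual informal intuition behind results of this kind, and it is consistent with the known fact from computable analysis that a computable, continuously differentiable function can have a non-computable derivative.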

38 Views

- Slides of my paper at ICASSP 2020: Greedy Hybrid Rate Adaptation in Dynamic Wireless Communication Environment

41 Views

- Accelerating Linear Algebra Kernels on a Massively Parallel Reconfigurable Architecture

232 Views

- Denoising Deep Boltzmann Machines: Compression for Deep Learning

45 Views

- Exploring Energy Efficient Quantum-resistant Signal Processing Using Array Processors

Quantum computers threaten to break public-key cryptography schemes such as DSA and ECDSA in polynomial time, which poses an imminent threat to secure signal processing.

Ring learning with errors (RLWE) lattice-based cryptography (LBC) is one of the most promising families of post-quantum cryptography (PQC) schemes in terms of efficiency and versatility. The two conventional methods for computing polynomial multiplication, the most compute-intensive routine in RLWE schemes, are direct convolution and the Number Theoretic Transform (NTT).
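To make the two approaches concrete, here is a minimal Python sketch of polynomial multiplication in the ring Z_q[x]/(x^n + 1) typically used by RLWE schemes, computed once by direct (schoolbook) negacyclic convolution and once with a radix-2 NTT. The parameters n = 256 and q = 7681 and all function names are illustrative assumptions, not taken from the paper; the NTT path assumes n is a power of two and q is a prime with q ≡ 1 (mod 2n). The code is not constant-time and is meant only to illustrate the arithmetic.

```python
import random


def find_psi(n: int, q: int) -> int:
    """Return a primitive 2n-th root of unity mod prime q.

    Assumes n is a power of two and q % (2 * n) == 1. For such n, any psi
    with psi^n == -1 (mod q) has multiplicative order exactly 2n.
    """
    for c in range(2, q):
        psi = pow(c, (q - 1) // (2 * n), q)
        if pow(psi, n, q) == q - 1:
            return psi
    raise ValueError("q has no primitive 2n-th root of unity")


def negacyclic_schoolbook(a, b, q):
    """O(n^2) product of a and b in Z_q[x]/(x^n + 1), using x^n = -1."""
    n = len(a)
    c = [0] * n
    for i in range(n):
        for j in range(n):
            k = i + j
            if k < n:
                c[k] = (c[k] + a[i] * b[j]) % q
            else:  # wrap-around term picks up a minus sign since x^n = -1
                c[k - n] = (c[k - n] - a[i] * b[j]) % q
    return c


def ntt(a, omega, q):
    """Recursive radix-2 NTT: evaluates polynomial a at omega^0..omega^(n-1) mod q."""
    n = len(a)
    if n == 1:
        return list(a)
    omega_sq = omega * omega % q
    even = ntt(a[0::2], omega_sq, q)
    odd = ntt(a[1::2], omega_sq, q)
    out = [0] * n
    w = 1
    for k in range(n // 2):
        # Butterfly: A(w^k) = E(w^2k) + w^k O(w^2k), A(-w^k) = E(w^2k) - w^k O(w^2k)
        t = w * odd[k] % q
        out[k] = (even[k] + t) % q
        out[k + n // 2] = (even[k] - t) % q
        w = w * omega % q
    return out


def negacyclic_ntt(a, b, q):
    """O(n log n) product in Z_q[x]/(x^n + 1) via a psi-twisted cyclic NTT."""
    n = len(a)
    psi = find_psi(n, q)
    psi_inv = pow(psi, q - 2, q)  # Fermat inverse, valid because q is prime
    omega, omega_inv = psi * psi % q, psi_inv * psi_inv % q
    n_inv = pow(n, q - 2, q)
    # Twisting coefficient i by psi^i turns the negacyclic product into a cyclic one.
    a_t = [a[i] * pow(psi, i, q) % q for i in range(n)]
    b_t = [b[i] * pow(psi, i, q) % q for i in range(n)]
    prod = [x * y % q for x, y in zip(ntt(a_t, omega, q), ntt(b_t, omega, q))]
    c_t = ntt(prod, omega_inv, q)  # inverse NTT, still missing the 1/n factor
    # Scale by 1/n and undo the twist with psi^(-i).
    return [c_t[i] * n_inv % q * pow(psi_inv, i, q) % q for i in range(n)]


if __name__ == "__main__":
    n, q = 256, 7681  # 7681 = 15 * 512 + 1, so q % (2n) == 1
    rng = random.Random(0)
    a = [rng.randrange(q) for _ in range(n)]
    b = [rng.randrange(q) for _ in range(n)]
    assert negacyclic_ntt(a, b, q) == negacyclic_schoolbook(a, b, q)
    print("schoolbook and NTT products agree")
```

The schoolbook path costs O(n²) modular multiplications, while the NTT path costs O(n log n), which is why NTT-based multiplication is usually preferred for large n. The ψ^i pre-twist is what lets a cyclic NTT compute the negacyclic (x^n = -1) product directly, without zero-padding to length 2n.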

23 Views

- Exploration Methodology for BTI-Induced Failures on RRAM-Based Edge AI Systems

Resistive switching memory (RRAM) technologies are seen by most of the scientific community as an enabler for edge-level applications such as embedded deep learning, AI, or audio and video signal processing. However, going beyond a "simple" replacement of eFlash in microcontrollers and introducing RRAM into the memory hierarchy is not a straightforward move. Indeed, integrating an RRAM technology into the cache hierarchy demands higher endurance than eFlash replacement does, and thus requires relaxed programming conditions.

ICASSP_VF.pdf

36 Views

- Stochastic Data-Driven Hardware Resilience to Efficiently Train Inference Models for Stochastic Hardware Implementations

Machine-learning algorithms are being employed in an increasing range of applications, spanning high-performance and energy-constrained platforms. It has been noted that the statistical nature of the algorithms can open up new opportunities for throughput and energy efficiency, by moving hardware into design regimes not limited to deterministic models of computation. This work aims to enable high accuracy in machine-learning inference systems, where computations are substantially affected by hardware variability.

54 Views