- Read more about Visual Coding for Humans and Machines

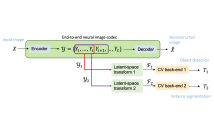

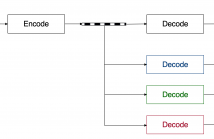

Visual content is increasingly being used for more than human viewing. For example, traffic video is automatically analyzed to count vehicles, detect traffic violations, estimate traffic intensity, and recognize license plates; images uploaded to social media are automatically analyzed to detect and recognize people, organize images into thematic collections, and so on; visual sensors on autonomous vehicles analyze captured signals to help the vehicle navigate, avoid obstacles and collisions, and optimize its movement.

410 Views

- Read more about Multi-Task Image and Video Compression

Visual content is increasingly being used for more than human viewing. For example, traffic video is automatically analyzed to count vehicles, detect traffic violations, estimate traffic intensity, and recognize license plates; images uploaded to social media are automatically analyzed to detect and recognize people, organize images into thematic collections, and so on; visual sensors on autonomous vehicles analyze captured signals to help the vehicle navigate, avoid obstacles and collisions, and optimize its movement.

342 Views

- Read more about A NOVEL STATE CONNECTION STRATEGY FOR QUANTUM COMPUTING TO REPRESENT AND COMPRESS DIGITAL IMAGES

Quantum image processing has drawn considerable attention because it promises faster data computation and storage than classical data processing systems. However, converting classical image data into the quantum domain remains challenging, as does the complexity of state label preparation. Existing techniques normally connect the pixel values directly to the state position. The recent EFRQI (efficient flexible representation of the quantum image) approach instead uses an auxiliary qubit that connects the pixel-representing qubits to the state position qubits via Toffoli gates, reducing the number of state connections.

25 Views

- Read more about Adaptive and Scalable Compression of Multispectral Images using VVC

The VVC codec is applied to the task of multispectral image (MSI) compression using adaptive and scalable coding structures. In a “plain” VVC approach, concepts from picture-to-picture temporal prediction are employed for decorrelation along the MSI’s spectral dimension. The popular principal component analysis (PCA) for spectral decorrelation is further evaluated in combination with VVC intra-coding for spatial decorrelation. This approach is referred to as PCA-VVC.
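
As a rough illustration of the spectral decorrelation step only, the sketch below applies PCA along the band axis of a multispectral cube using NumPy. It is not the authors' implementation: the function names, the `(height, width, bands)` layout, and the use of a plain eigendecomposition of the band covariance are all assumptions made for this example, and the VVC intra-coding of the resulting component images is not shown.

```python
import numpy as np


def pca_spectral_decorrelate(cube):
    """Decorrelate a (height, width, bands) cube along its spectral axis.

    Hypothetical helper for illustration. Returns the component images,
    the eigenvector matrix, and the per-band mean needed for inversion.
    """
    h, w, b = cube.shape
    pixels = cube.reshape(-1, b).astype(np.float64)  # one row per pixel
    mean = pixels.mean(axis=0)
    centered = pixels - mean
    # Band-by-band covariance matrix (b x b).
    cov = centered.T @ centered / (centered.shape[0] - 1)
    # eigh suits symmetric matrices; it returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(cov)
    order = np.argsort(eigvals)[::-1]  # strongest component first
    eigvecs = eigvecs[:, order]
    components = (centered @ eigvecs).reshape(h, w, b)
    return components, eigvecs, mean


def pca_spectral_reconstruct(components, eigvecs, mean):
    """Invert pca_spectral_decorrelate (lossless up to rounding)."""
    h, w, b = components.shape
    pixels = components.reshape(-1, b) @ eigvecs.T + mean
    return pixels.reshape(h, w, b)
```

In a PCA-VVC style pipeline, each component image would then be passed to an intra coder for spatial decorrelation, with the eigenvectors and mean sent as side information; how the paper handles quantization and side information is not stated in this abstract.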

53 Views

- Read more about Semantically Adaptive JND Modeling with Object-wise Feature Characterization and Cross-object Interaction

44 Views

- Read more about Entropy Coding Improvement for Low-complexity Compressive Auto-encoders

dcc_poster.pdf

55 Views

- Read more about Multiscale convolutional neural networks for in-loop video restoration

In this paper, we consider using a multiscale approach to reduce complexity while maintaining coding efficiency. Experimental results demonstrate a 5.4× reduction in MAC operations while achieving average bit-rate savings of 6.4% and 6.3% for all-intra and random-access coding, respectively, when compared to the evolving AV2 standard. Ablation studies are also provided and show that the approach achieves all but 0.2% of the coding efficiency of full-resolution processing.

89 Views

Deep variational autoencoders for image and video compression have gained significant traction in recent years, due to their potential to offer competitive or better compression rates compared to long-established traditional codecs such as AVC, HEVC, or VVC. However, because of their complexity and energy consumption, these approaches are still far from practical use in industry. More recently, implicit neural representation (INR) based codecs have emerged, and have lower complexity and energy usage to classical approaches at

88 Views
