ICIP 2021 - The International Conference on Image Processing (ICIP), sponsored by the IEEE Signal Processing Society, is the premier forum for the presentation of technological advances and research results in the fields of theoretical, experimental, and applied image and video processing. Held annually since 1994, ICIP brings together leading engineers and scientists in image and video processing from around the world.

- Read more about An Efficient Compression Method For Sign Information Of DCT Coefficients Via Sign Retrieval

Compression of the sign information of discrete cosine transform coefficients is an intractable problem in image compression schemes due to the equiprobable occurrence of the sign bits. To overcome this difficulty, we propose an efficient compression method for such sign information based on phase retrieval, which is a classical signal restoration problem attempting to find the phase information of discrete Fourier transform coefficients from their magnitudes.
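The equiprobability claim can be illustrated with a small sketch. It is not the method proposed in the paper; it only estimates the empirical entropy of the signs of AC coefficients after a blockwise 2-D DCT. The helper names (`binary_entropy`, `dct_matrix`, `ac_sign_entropy`) and the use of synthetic Gaussian 8x8 blocks in place of real image data are assumptions made for illustration.

```python
import numpy as np


def binary_entropy(p: float) -> float:
    """Shannon entropy in bits of a binary source with P(1) = p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return float(-(p * np.log2(p) + (1.0 - p) * np.log2(1.0 - p)))


def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II matrix of size n x n."""
    k = np.arange(n)[:, None]  # frequency index (rows)
    i = np.arange(n)[None, :]  # sample index (columns)
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] = np.sqrt(1.0 / n)  # DC row uses a different scale factor
    return m


def ac_sign_entropy(blocks: np.ndarray) -> float:
    """Empirical entropy (bits per sign) of the nonzero AC coefficient signs."""
    n = blocks.shape[-1]
    d = dct_matrix(n)
    coeffs = d @ blocks @ d.T  # separable 2-D DCT applied to every block
    ac = coeffs.reshape(len(blocks), -1)[:, 1:]  # drop the DC coefficient
    ac = ac[ac != 0]  # zero coefficients carry no sign
    p_negative = float(np.mean(ac < 0))
    return binary_entropy(p_negative)


# Synthetic stand-in for image blocks (assumption: zero-mean Gaussian data).
rng = np.random.default_rng(0)
blocks = rng.normal(size=(2000, 8, 8))
print(ac_sign_entropy(blocks))
```

On such data the estimate comes out very close to 1 bit per sign, which is why a plain entropy coder gains essentially nothing on sign bits and a different approach, such as the retrieval-based one described above, is needed.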

37 Views

- Read more about TEST-TIME ADAPTATION FOR OUT-OF-DISTRIBUTED IMAGE INPAINTING

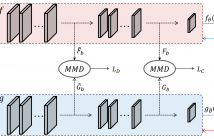

Deep-learning-based image inpainting algorithms have shown great performance thanks to powerful priors learned from numerous external natural images. However, they produce unpleasant results for test images whose distributions are far from those of the training images, because the models are biased toward the training images. In this paper, we propose a simple image inpainting algorithm with test-time adaptation, named AdaFill. Given a single out-of-distribution test image, our goal is to complete the hole region more naturally than pre-trained inpainting models do.

31 Views

- Read more about Semantic-Preserving Metric Learning for Video-Text Retrieval (Poster)

11 Views

Generative Adversarial Networks (GANs) have been used recently for anomaly detection from images, where the anomaly scores are obtained by comparing the global difference between the input and generated image. However, the anomalies often appear in local areas of an image scene, and ignoring such information can lead to unreliable detection of anomalies.
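The contrast between a global and a local comparison can be shown with a toy sketch. This is not the scoring used in the paper; `global_score`, `local_score`, the mean absolute difference, and the 8-pixel patch size are hypothetical choices for illustration, and the "generated" image is a plain array rather than the output of a GAN.

```python
import numpy as np


def global_score(x: np.ndarray, x_hat: np.ndarray) -> float:
    """Mean absolute difference over the whole image."""
    return float(np.mean(np.abs(x - x_hat)))


def local_score(x: np.ndarray, x_hat: np.ndarray, patch: int = 8) -> float:
    """Largest mean absolute difference over non-overlapping patches."""
    diff = np.abs(x - x_hat)
    h, w = diff.shape
    # Crop so that both dimensions are multiples of the patch size.
    h_c, w_c = h - h % patch, w - w % patch
    tiles = diff[:h_c, :w_c].reshape(h_c // patch, patch, w_c // patch, patch)
    return float(tiles.mean(axis=(1, 3)).max())


# Toy example: the reconstruction is perfect except for one 8x8 region.
x_hat = np.zeros((64, 64))
x = x_hat.copy()
x[8:16, 8:16] = 1.0

print(global_score(x, x_hat), local_score(x, x_hat))
```

Here the global score is 64/4096 = 0.015625 while the patchwise score is 1.0, so a small but severe local anomaly is nearly invisible to the global measure.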

20 Views

- Read more about Blockwise Temporal-Spatial Pathway Network

15 Views

- Read more about IMAGE DEBLURRING BASED ON LIGHTWEIGHT MULTI-INFORMATION FUSION NETWORK

Recently, deep-learning-based image deblurring has been well developed. However, exploiting detailed image features in a deep learning framework always requires a large number of parameters, which inevitably burdens the network with a high computational cost. To solve this problem, we propose a lightweight multi-information fusion network (LMFN) for image deblurring. The proposed LMFN is designed as an encoder-decoder architecture. In the encoding stage, the image feature is reduced to various small-

27 Views

- Read more about poster--ROBUST VISUAL OBJECT TRACKING WITH SPATIOTEMPORAL REGULARISATION AND DISCRIMINATIVE OCCLUSION DEFORMATION

Spatiotemporal regularized Discriminative Correlation Filters (DCF) have been proposed recently for visual tracking, achieving state-of-the-art performance. However, the tracking performance of the online learning model used in this kind of method is highly dependent on the quality of the target's appearance features, and the target's appearance can be heavily deformed by occlusion from other objects or by variations in its own dynamic appearance. In this paper, we propose a new approach to mitigate these two kinds of appearance deformation.

15 Views

- Read more about M3VSNet: Unsupervised Multi-metric Multi-view Stereo Network

Current multi-view stereo (MVS) methods based on supervised learning networks perform impressively compared with traditional MVS methods. However, the ground-truth depth maps needed for training are hard to obtain and cover only limited kinds of scenarios. In this paper, we propose a novel unsupervised multi-metric MVS network, named M^3VSNet, for dense point cloud reconstruction without any supervision.

slide1091.pdf

73 Views

- Read more about A Consensual Collaborative Learning Method for Remote Sensing Image Classification under Noisy Multi-Labels

Collecting a large number of reliable training images annotated with multiple land-cover class labels for multi-label classification is time-consuming and costly in remote sensing (RS). To address this problem, publicly available thematic products are often used to annotate RS images at zero labeling cost. However, such an approach may produce a training set with noisy multi-labels, which distorts the learning process. To overcome this, we propose a Consensual Collaborative Multi-Label Learning (CCML) method.

20 Views