ICIP 2020 is a fully virtual conference. The International Conference on Image Processing (ICIP), sponsored by the IEEE Signal Processing Society, is the premier forum for the presentation of technological advances and research results in the fields of theoretical, experimental, and applied image and video processing. Held annually since 1994, ICIP brings together leading engineers and scientists in image and video processing from around the world.

- Read more about Improving PSNR-Based Quality Metrics Performance for Point Cloud Geometry

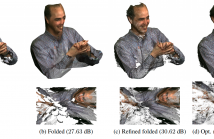

Increased interest in immersive applications has drawn attention to emerging 3D imaging representation formats, notably light fields and point clouds (PCs). PCs are now one of the most popular 3D media formats, owing to recent developments in PC acquisition, namely depth sensors and signal processing algorithms. To obtain high-fidelity 3D representations of visual scenes, a huge amount of PC data is typically acquired, which demands efficient compression solutions.

53 Views

Existing techniques to compress point cloud attributes leverage either geometric or video-based compression tools. We explore a radically different approach inspired by recent advances in point cloud representation learning. Point clouds can be interpreted as 2D manifolds in 3D space. Specifically, we fold a 2D grid onto a point cloud and we map attributes from the point cloud onto the folded 2D grid using a novel optimized mapping method. This mapping results in an image, which opens a way to apply existing image processing techniques on point cloud attributes.
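As a rough illustration of the last step only, the sketch below maps point cloud attributes onto an already folded grid. It assumes the folded grid positions are given (the folding itself is not shown), and it uses a plain nearest-neighbor assignment as a stand-in, not the optimized mapping the abstract refers to. The function name `map_attributes_to_grid` and its arguments are hypothetical.

```python
def map_attributes_to_grid(folded_grid, points, colors):
    """Build an image by giving each folded grid cell the color of its nearest point.

    folded_grid[r][c] is the assumed 3D position of 2D grid cell (r, c) after folding.
    points[i] is a 3D point and colors[i] is its attribute (for example an RGB tuple).
    Nearest-neighbor assignment is an illustrative stand-in for an optimized mapping.
    """
    def sq_dist(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    image = []
    for row in folded_grid:
        image_row = []
        for cell in row:
            # Index of the point cloud point closest to this folded grid cell.
            nearest = min(range(len(points)), key=lambda i: sq_dist(cell, points[i]))
            image_row.append(colors[nearest])
        image.append(image_row)
    return image


if __name__ == "__main__":
    points = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0)]
    colors = [(255, 0, 0), (0, 255, 0), (0, 0, 255), (255, 255, 255)]
    grid = [[(0.1, 0.0, 0.0), (0.9, 0.1, 0.0)],
            [(0.0, 0.8, 0.0), (1.0, 1.0, 0.2)]]
    for image_row in map_attributes_to_grid(grid, points, colors):
        print(image_row)
```

The result is an ordinary 2D array of attributes, so standard image tools can be applied to it afterwards.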

51 Views

- Read more about Optimal Measurement Budget Allocation for Particle Filtering

Particle filtering is a powerful tool for target tracking. When the budget for observations is restricted, the measurements must be reduced to a limited number of carefully selected samples. A discrete stochastic nonlinear dynamical system is studied over a finite time horizon. The problem of selecting the optimal measurement times for particle filtering is formalized as a combinatorial optimization problem. We propose an approximate solution based on the nesting of a genetic algorithm, a Monte Carlo algorithm and a particle filter.
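The nesting described above can be sketched on a toy problem. The code below is a minimal sketch under several assumptions: the scalar dynamics, the noise levels, and all function names are hypothetical, and the outer genetic algorithm is replaced by an exhaustive search over schedules, which is only feasible for very short horizons. The inner layers are a bootstrap particle filter that updates only at the selected times and a Monte Carlo estimate of its mean squared error.

```python
import math
import random
from itertools import combinations

PROCESS_STD = 0.5   # assumed process noise standard deviation
OBS_STD = 0.3       # assumed observation noise standard deviation


def step(x, rng):
    """Hypothetical nonlinear dynamics used only for illustration."""
    return 0.9 * x + math.sin(x) + rng.gauss(0.0, PROCESS_STD)


def simulate(horizon, rng):
    """Draw one state trajectory and a noisy observation at every time step."""
    x, states, observations = 0.0, [], []
    for _ in range(horizon):
        x = step(x, rng)
        states.append(x)
        observations.append(x + rng.gauss(0.0, OBS_STD))
    return states, observations


def particle_filter(observations, measure_times, n_particles, rng):
    """Bootstrap particle filter that only uses observations at measure_times."""
    particles = [0.0] * n_particles
    estimates = []
    for t, y in enumerate(observations):
        particles = [step(p, rng) for p in particles]
        if t in measure_times:
            # Gaussian likelihood weights; the tiny floor avoids an all-zero total.
            weights = [math.exp(-0.5 * ((y - p) / OBS_STD) ** 2) + 1e-300
                       for p in particles]
            particles = rng.choices(particles, weights=weights, k=n_particles)
        estimates.append(sum(particles) / n_particles)
    return estimates


def expected_error(measure_times, horizon, n_runs, n_particles, seed):
    """Monte Carlo estimate of the filter's mean squared error for one schedule."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_runs):
        states, observations = simulate(horizon, rng)
        estimates = particle_filter(observations, measure_times, n_particles, rng)
        total += sum((e - s) ** 2 for e, s in zip(estimates, states)) / horizon
    return total / n_runs


def best_schedule(horizon, budget, n_runs=20, n_particles=100, seed=0):
    """Exhaustive search over schedules (a stand-in for the genetic algorithm)."""
    return min(combinations(range(horizon), budget),
               key=lambda c: expected_error(set(c), horizon, n_runs, n_particles, seed))


if __name__ == "__main__":
    print(best_schedule(horizon=6, budget=2))
```

The number of candidate schedules grows combinatorially with the horizon and the budget, which is why a heuristic search such as a genetic algorithm is attractive for realistic problem sizes.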

26 Views

- Read more about Super-resolution of 3D MRI corrupted by heavy noise with the Median Filter Transform

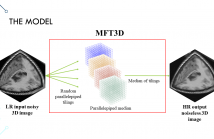

The acquisition of 3D MRIs is adversely affected by many degrading factors, including low spatial resolution and noise. Image enhancement techniques are commonplace, but few proposals address increasing the spatial resolution and removing noise at the same time. This work proposes an algorithm to address this need. The proposal tiles the 3D image space into parallelepipeds and applies a median filter in each parallelepiped. The results obtained from several such tilings are then combined by a subsequent median computation.
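The two-stage median idea can be sketched as follows. This is a simplified sketch, not the authors' implementation: it assumes axis-aligned box tiles that differ only by a shift (the abstract speaks of general parallelepipeds), it works at the native resolution and so covers only the denoising part, and the names `tile_median` and `median_filter_transform` are hypothetical.

```python
from statistics import median


def tile_key(z, y, x, block, offset):
    """Index of the shifted box tile that contains voxel (z, y, x)."""
    return ((z + offset[0]) // block[0],
            (y + offset[1]) // block[1],
            (x + offset[2]) // block[2])


def tile_median(volume, block, offset):
    """Replace every voxel by the median of the tile it falls in."""
    nz, ny, nx = len(volume), len(volume[0]), len(volume[0][0])
    groups = {}
    for z in range(nz):
        for y in range(ny):
            for x in range(nx):
                key = tile_key(z, y, x, block, offset)
                groups.setdefault(key, []).append(volume[z][y][x])
    tile_value = {key: median(values) for key, values in groups.items()}
    return [[[tile_value[tile_key(z, y, x, block, offset)] for x in range(nx)]
             for y in range(ny)]
            for z in range(nz)]


def median_filter_transform(volume, block=(2, 2, 2),
                            offsets=((0, 0, 0), (1, 0, 0), (0, 1, 0),
                                     (0, 0, 1), (1, 1, 1))):
    """Combine several tile-wise median images by a voxel-wise median."""
    filtered = [tile_median(volume, block, offset) for offset in offsets]
    nz, ny, nx = len(volume), len(volume[0]), len(volume[0][0])
    return [[[median([f[z][y][x] for f in filtered]) for x in range(nx)]
             for y in range(ny)]
            for z in range(nz)]


if __name__ == "__main__":
    # A constant 4x4x4 volume with a single impulse-noise voxel.
    noisy = [[[5 for _ in range(4)] for _ in range(4)] for _ in range(4)]
    noisy[1][1][1] = 100
    cleaned = median_filter_transform(noisy)
    print(cleaned[1][1][1])
```

Using several shifted tilings keeps the block boundaries of any single tiling from showing up in the combined result, since each voxel is judged against a different neighborhood in each tiling.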

20 Views

- Read more about NOVEL VIEW SYNTHESIS WITH SKIP CONNECTIONS

Novel view synthesis is the task of synthesizing an image of an object at an arbitrary viewpoint given one or a few views of the object. The output image of novel view synthesis exhibits a significant structural change from the input. Because of this large change, skip connections or the U-Net architecture, which can preserve the multi-level characteristics of the input images, cannot be directly used for novel view synthesis. In this paper, we investigate several variations of skip connections on two widely used novel view synthesis modules: pixel generation and flow prediction.

31 Views

- Read more about Real-time semantic background subtraction

Semantic background subtraction (SBS) has been shown to improve the performance of most background subtraction algorithms by combining them with semantic information, derived from a semantic segmentation network. However, SBS requires high-quality semantic segmentation masks for all frames, which are slow to compute. In addition, most state-of-the-art background subtraction algorithms are not real-time, which makes them unsuitable for real-world applications.

67 Views

- Read more about 3D OBJECT DETECTION USING TEMPORAL LIDAR DATA

63 Views

- Read more about CSIOR: An Algorithm for Ordered Triangular Mesh Regularization

3D scanners generate irregularly distributed point clouds in most cases. Dealing with such data, often in the form of triangular meshes, requires a pre-processing step to regularize the shape and size of the triangle facets. In this paper, we propose CSIOR, a novel mesh regularization technique which is capable of producing quasi-equilateral triangles and is distinguished by two novel features, namely, its intrinsic ordered aspect and its preservation of the geometric texture of the surface (relief

49 Views